American technology firm Clearview AI violated Canadian privacy laws by collecting photos of Canadians without their knowledge or consent, an investigation by four of Canada’s privacy commissioners has found.

The report found that Clearview’s technology created a significant risk to individuals by allowing law enforcement and companies to match photos against its database of more than three billion images, including images of Canadians and children.

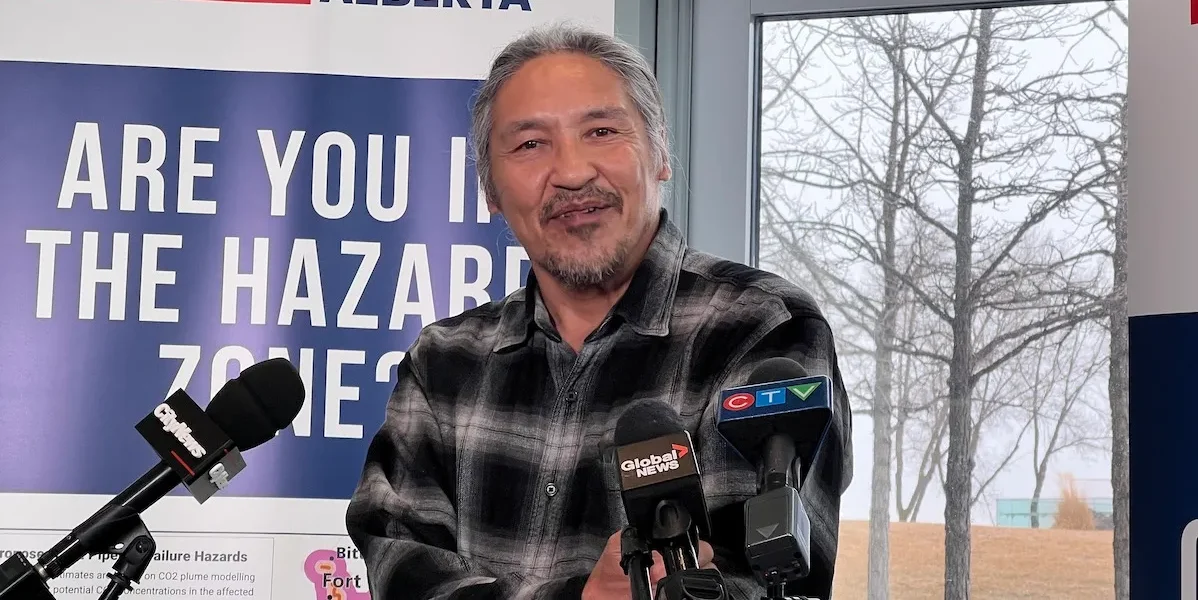

“What Clearview does is mass surveillance and it is illegal,” federal privacy commissioner Daniel Therrien said in a statement. “It is completely unacceptable for millions of people who will never be implicated in any crime to find themselves continually in a police lineup.

“Yet the company continues to claim its purposes were appropriate, citing the requirement under federal privacy law that its business needs to be balanced against privacy rights.”

Delete photos of Canadians in database, commissioners say

The commissioners called for Clearview to stop offering its technology in Canada, stop collecting images of Canadians and delete the photos of Canadians already in its database.

If the company refuses to follow the recommendations, the four privacy commissioners will “pursue other actions available under their respective acts to bring Clearview into compliance with Canadian laws,” the statement said.

Doug Mitchell, lawyer for Clearview AI, said the company simply collects public data in the same way as companies like Google.

“Clearview AI’s technology is not available in Canada and it does not operate in Canada. In any event, Clearview AI only collects public information from the Internet which is explicitly permitted under PIPEDA,” Mitchell wrote in a statement, issued minutes after the report was made public.

PIPEDA is Canada’s Personal Information Protection and Electronic Documents Act.

“The Federal Court of Appeal has previously ruled in the privacy context that publicly available information means exactly what it says: ‘available or accessible by the citizenry at large,’” Mitchell wrote. “There is no reason to apply a different standard here.”

Privacy experts say technology could be misused

The report by four of Canada’s privacy commissioners comes nearly seven months after Clearview agreed to no longer make its controversial facial recognition software available in Canada. A number of Canadian law enforcement agencies, including the RCMP and the Toronto and Calgary police, had been using the technology to help identify perpetrators and victims of crimes.

With the technology, police could input the picture of a victim or suspected criminal and compare it with billions of photos Clearview had collected from the internet and social media accounts.

However, privacy experts expressed concerns that the technology could be misused.

While police forces said last summer that they stopped using Clearview AI, questions remained about what would happen to the personal information of Canadians that the company had already collected and whether the company would stop collecting personal information belonging to Canadians.

‘Proud of our record in assisting Canadian law enforcement’

In July, company CEO Hoan Ton-That said the company had ceased its operations in Canada. He said Canadians would be able to opt out of Clearview’s search results.

“We are proud of our record in assisting Canadian law enforcement to solve some of the most heinous crimes, including crimes against children,” Ton-That said in a statement at the time. “We will continue to co-operate with the (Office of the Privacy Commissioner) on other related issues.”

In the report, the federal privacy commissioner along with his colleagues in Quebec, British Columbia and Alberta found that Clearview collected images in Canada and actively marketed its services to Canadian police forces. The RCMP paid for its services, and there were 48 accounts created for Canadian law enforcement agencies.

In a separate investigation, the federal privacy commissioner’s office is probing the way the RCMP used Clearview’s technology.
