Introduction: The Growing Concern Over Facial Recognition Bias

As facial recognition technology (FRT) continues to expand across Canada, a growing body of research and civil rights advocacy highlights a troubling reality: this technology is not neutral. Studies have shown that FRT exhibits significant racial bias, disproportionately misidentifying Black, Indigenous, and other racialized people. Despite its promise of enhancing security and efficiency, its deployment in policing, border control, and private sector applications has raised alarms about systemic racism, privacy violations, and racial profiling.

“This technology is inherently flawed,” says Dr. Ruha Benjamin, author of Race After Technology. “It operates within a society that already discriminates against people of color, so it only amplifies existing biases.”

This article explores how facial recognition technology in Canada has perpetuated racial bias, examines its impact on racialized communities, and discusses what can be done to ensure accountability and fairness in its use.

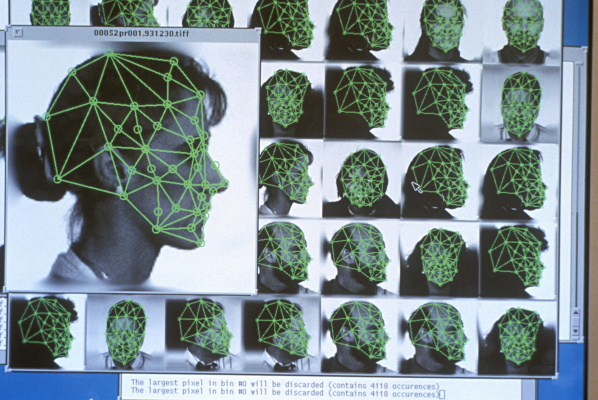

The Science of Bias: Why Facial Recognition Fails Racialized People

Multiple studies have found that facial recognition software is significantly less accurate for Black, Indigenous, and darker-skinned individuals than for white individuals. In 2019, a study from the National Institute of Standards and Technology (NIST) found that, depending on the algorithm, facial recognition systems were up to 100 times more likely to falsely match the faces of Black and Asian individuals than those of white individuals.
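To make a figure like "100 times more likely" concrete, it helps to see how such a disparity is typically measured. The sketch below is illustrative only and is not NIST's methodology or code: it assumes a list of hypothetical comparison trials, each labelled with a demographic group, whether the two photos really show the same person, and whether the system declared a match. The group names, the function names, and all of the numbers are made up for the example.

```python
from collections import defaultdict


def false_match_rates(trials):
    """Return the false match rate per group.

    trials: iterable of (group, same_person, said_match) tuples.
    Only pairs of DIFFERENT people count toward the rate, because a
    false match is the system wrongly declaring two people identical.
    """
    different_person_pairs = defaultdict(int)
    false_matches = defaultdict(int)
    for group, same_person, said_match in trials:
        if same_person:
            continue  # genuine pairs cannot produce a false match
        different_person_pairs[group] += 1
        if said_match:
            false_matches[group] += 1
    return {
        group: false_matches[group] / total
        for group, total in different_person_pairs.items()
    }


def disparity_ratio(rates, group, reference):
    """How many times higher one group's false match rate is than another's."""
    if rates[reference] == 0:
        raise ValueError("reference group has no false matches; ratio undefined")
    return rates[group] / rates[reference]


# Hypothetical data: 1,000 different-person comparisons per group.
trials = (
    [("group_a", False, True)] * 1 + [("group_a", False, False)] * 999
    + [("group_b", False, True)] * 10 + [("group_b", False, False)] * 990
    + [("group_a", True, True)] * 50  # same-person pairs are ignored
)

rates = false_match_rates(trials)
ratio = disparity_ratio(rates, "group_b", "group_a")
print(rates)
print(f"group_b is falsely matched about {ratio:.0f}x as often as group_a")
```

In this invented example, one group is falsely matched in 1 of 1,000 comparisons and the other in 10 of 1,000, a tenfold gap. When a system searches millions of images, even a small gap in these rates translates into many more innocent people from one group being flagged.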

“The technology was trained primarily on white faces,” explains Dr. Timnit Gebru, a leading AI researcher and former Google AI ethicist. “So when the system encounters a non-white face, it struggles to make accurate identifications. This isn’t just a glitch—it’s systemic discrimination.”

In Canada, the issue is no different. A 2021 investigation by the Privacy Commissioner of Canada found that law enforcement agencies across the country had used flawed facial recognition tools without proper oversight, disproportionately targeting racialized individuals.

How Canadian Law Enforcement Uses Facial Recognition Against Racialized Communities

Despite concerns about accuracy, several Canadian police forces, including the RCMP and Toronto Police Service, have used facial recognition software to identify suspects and monitor public gatherings.

Civil rights groups argue that the technology is being deployed disproportionately in racialized and low-income communities, exacerbating existing issues of racial profiling.

“We already know that Black and Indigenous people are over-policed in Canada,” says Desmond Cole, journalist and author of The Skin We’re In. “Facial recognition just gives police another tool to surveil and criminalize us. It’s digital carding.”

One of the most controversial cases involved the use of Clearview AI by the Toronto Police Service (TPS). Clearview AI is a widely criticized facial recognition tool that scrapes images from social media without consent. An investigation revealed that TPS officers used Clearview AI more than 3,400 times, often without warrants or transparency.

“This was a massive privacy violation,” says Brenda McPhail, Director of Privacy, Technology & Surveillance at the Canadian Civil Liberties Association (CCLA). “And because these tools have such high error rates for racialized people, it means innocent individuals are being wrongfully identified and subjected to police scrutiny.”

Wrongful Arrests and the Human Cost of Misidentification

One of the most devastating consequences of biased facial recognition technology is the risk of wrongful arrests. Several cases in the U.S. have already demonstrated the catastrophic impact of FRT errors, with Black men disproportionately arrested due to misidentifications.

Although no wrongful arrests due to FRT have been confirmed in Canada, privacy advocates warn that it’s only a matter of time.

“The technology is flawed, but it’s still being used to make life-altering decisions,” says Dr. Kate Robertson, an AI ethics researcher at the University of Toronto’s Citizen Lab. “If we don’t regulate it now, the first wrongful arrest in Canada is just around the corner.”

Facial Recognition and Border Control: Targeting Refugees and Migrants

Beyond policing, facial recognition technology is increasingly used at Canada’s borders, raising serious concerns about discrimination against refugees and migrants.

A 2022 Canada Border Services Agency (CBSA) pilot project used facial recognition scanners to verify travelers’ identities. However, reports emerged that racialized individuals were far more likely to be flagged for additional screening.

“The border is already a site of systemic racism,” says Harsha Walia, activist and author of Border and Rule. “Facial recognition just automates the discrimination. Refugees and immigrants from the Global South are facing even more scrutiny and delays.”

The Fight for Regulation: Civil Rights Groups Push Back

In response to public outcry, civil rights groups and privacy advocates have been demanding stricter regulation of, or outright bans on, the use of facial recognition in Canada.

“The technology is fundamentally flawed and should not be used until it can be proven to be bias-free,” says Michael Bryant, Executive Director of the Canadian Civil Liberties Association.

In 2023, Montreal and Vancouver became the first Canadian cities to ban facial recognition technology in policing, following similar moves in major U.S. cities like San Francisco and Boston.

“We need nationwide protections,” says NDP MP Matthew Green, a vocal advocate for stronger digital rights legislation. “Facial recognition is too dangerous to be left unregulated.”

Looking Ahead: The Future of Facial Recognition in Canada

With growing public awareness and legal challenges, the future of facial recognition technology in Canada remains uncertain. Some tech companies are now rethinking their use of FRT, with IBM and Microsoft scaling back their facial recognition programs due to bias concerns.

Meanwhile, organizations like the Ontario Human Rights Commission (OHRC) are calling for strict racial impact assessments before deploying any new AI-driven surveillance tools.

“If Canada is serious about racial justice, we need to stop using tools that harm racialized communities,” says Dr. Safiya Umoja Noble, author of Algorithms of Oppression. “Facial recognition is not just a technical issue—it’s a human rights issue.”

Conclusion: The Need for Immediate Action

Facial recognition technology in Canada is at a crossroads. While it offers potential benefits for security and identity verification, its racial biases and discriminatory applications make it a dangerous tool when left unregulated.

As history has shown, technologies that reinforce racial inequality must be challenged. From the days of carding and racial profiling to the digital surveillance of today, racialized communities have always borne the brunt of unjust policing tactics.

“We cannot afford to let facial recognition technology become the new frontier of systemic racism,” says Desmond Cole. “The time to act is now.”

Canada must make a choice: Will we allow this technology to deepen racial inequalities, or will we demand transparency, accountability, and justice?

The future of civil rights in the digital age depends on the answer.

References:

- National Institute of Standards and Technology (NIST), Bias in Facial Recognition Algorithms

- Timnit Gebru, AI Ethics and Racial Bias in Facial Recognition

- Privacy Commissioner of Canada, Report on Facial Recognition and Law Enforcement

- Desmond Cole, The Skin We’re In

- Brenda McPhail, CCLA Statement on Facial Recognition in Policing

- Ontario Human Rights Commission, Racial Disparities in Digital Surveillance