Starting with the top-of-the-line Core i9-12900K CPU, we can finally show you how Intel’s new 12th generation Alder Lake processor architecture performs. The chip is priced at $650, which means Intel has positioned their new flagship desktop CPU squarely between the Ryzen 9 5900X and 5950X, which will currently set you back $520 and $750, respectively.

Today is all about real-world performance, as we have already covered the 12900K’s specifications. For all that goodness, check out our Alder Lake preview here.

The Core i9-12900K is an interesting beast, packing 8 performance cores along with 8 efficient cores for a total of 24 threads. That seems like a strange number, and it’s because the efficient cores, or “E-cores”, don’t support Hyper-Threading.
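The thread count follows from simple arithmetic, and the same relationship can be inverted to recover the P-core/E-core split from a cores/threads pair. A minimal sketch, assuming every P-core runs two threads and every E-core runs one; the function names are illustrative:

```python
def total_threads(p_cores: int, e_cores: int) -> int:
    """Two threads per Hyper-Threaded P-core, one per E-core."""
    return 2 * p_cores + e_cores


def split_cores(cores: int, threads: int) -> tuple[int, int]:
    """Recover (p_cores, e_cores) from total core and thread counts.

    With threads = 2*P + E and cores = P + E, subtracting gives P = threads - cores.
    """
    p_cores = threads - cores
    e_cores = cores - p_cores
    if p_cores < 0 or e_cores < 0:
        raise ValueError("counts do not fit a 2-thread P-core / 1-thread E-core layout")
    return p_cores, e_cores


print(total_threads(8, 8))   # 24
print(split_cores(16, 24))   # (8, 8)
```

Applied to a 12 / 20 part, the same inversion gives 8 P-cores and 4 E-cores.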

The P-cores clock up to 5.2 GHz, with the E-cores limited to 3.9 GHz. There’s 30 MB of L3 cache, a total of 14 MB of L2 cache, 16 PCIe 5.0 lanes from the CPU and four PCIe 4.0 lanes. Another new feature is DDR5 memory support, though Alder Lake also supports DDR4. You’ll have to pick which memory technology to pair with your 12th-gen processor, though, since you cannot mix the two.

| | Intel Core i9 12900K | Intel Core i7 12700K | Intel Core i5 12600K | Intel Core i9 11900K | Intel Core i7 11700K |
|---|---|---|---|---|---|
| MSRP | $650 | $450 | $320 | $540 | $400 |
| Release Date | November 2021 | November 2021 | November 2021 | March 2021 | March 2021 |
| Cores / Threads | 16 / 24 | 12 / 20 | 10 / 16 | 8 / 16 | 8 / 16 |
| Base Frequency (E-core / P-core) | 2.4 / 3.4 GHz | 2.7 / 3.6 GHz | 2.8 / 3.7 GHz | 3.5 GHz | 3.6 GHz |
| Max Turbo (E-core / P-core) | 3.9 / 5.2 GHz | 3.8 / 5.0 GHz | 3.6 / 4.9 GHz | 5.3 GHz | 5.0 GHz |
| L3 Cache | 30 MB | 25 MB | 20 MB | 20 MB | 16 MB |
| Memory | DDR5-4800 / DDR4-3200 | DDR5-4800 / DDR4-3200 | DDR5-4800 / DDR4-3200 | DDR4-3200 | DDR4-3200 |
| Socket | LGA 1700 | LGA 1700 | LGA 1700 | LGA 1200 | LGA 1200 |

Stock memory support covers either DDR4-3200 or DDR5-4800, and today we’ll be testing both memory types. We won’t bother with DDR5-4800, though. Instead, to show what this new memory technology really offers, we have equipped our Alder Lake CPU with G.Skill’s Trident Z5 DDR5-6000 CL36 memory.

As for the motherboards, we’re using the MSI Z690 Unify for DDR5 testing and the MSI Z690 Tomahawk WiFi DDR4 for our DDR4 test system. Both motherboards work exceptionally well and we appreciate the fact that MSI has nailed their BIOS out of the gate. All application and gaming data was collected using the Radeon RX 6900 XT graphics card.

Due to the hybrid core design of Alder Lake, the 12900K, along with most of the 12th-gen processors, requires Windows 11 and its improved thread scheduler for optimal performance. Therefore, we’ve tested using a fresh install of Windows 11 and have updated all other benchmark data for Ryzen and other CPUs running Microsoft’s latest OS.

The Ryzen test system is running the Asus ROG Crosshair VIII Dark Hero motherboard with the latest BIOS update, and of course, all the latest Windows updates and drivers. It’s been a mammoth task getting all this data done in time for the Alder Lake launch, and yes, there is more to come as we’re working on reviews for the Core i7-12700KF, followed by the Core i5-12600K review, and much more.

Application Benchmarks

We’ll start with Cinebench, and right off the bat we can tell you these results are very impressive. The 12900K manages to make the Ryzen 9 5950X look slow, boosting multi-core performance by 13%.

We’re also looking at a 76% performance improvement from the 11900K (!) and that thing was released just 9 months ago for $550, just $100 less than the 12900K.

We strongly recommended avoiding the 11900K like the plague, and seeing these results just confirms our initial stance.

A 76% increase is a massive generational leap and yet Intel’s charging less than a 20% premium, so already the 12900K is looking solid.

It’s interesting to note that DDR5 did nothing to improve the performance of the 12900K in this test, but that’s not terribly surprising as we know Cinebench isn’t a particularly memory sensitive test.

For the single-core test, Cinebench R23 uses the P-cores, and as you can see these big cores are fast, really fast. When compared to the Core i9-11900K we’re looking at an 18% increase in single-core performance and a 23% increase over AMD’s Zen 3 architecture.

7-Zip results are somewhat strange, but we did go back and double check them just to be sure. Here we’re looking at a massive difference in performance for the 12900K using DDR4 and DDR5 memory in the 7-Zip compression test. Using DDR4-3200 memory, the 12900K was comparable to the 10900K, making it quite a bit slower than the 12 and 16-core Ryzen processors.

However, using DDR5-6000 memory boosted performance by a whopping 50%, allowing the 12900K to jump ahead of the 5950X which is quite incredible.

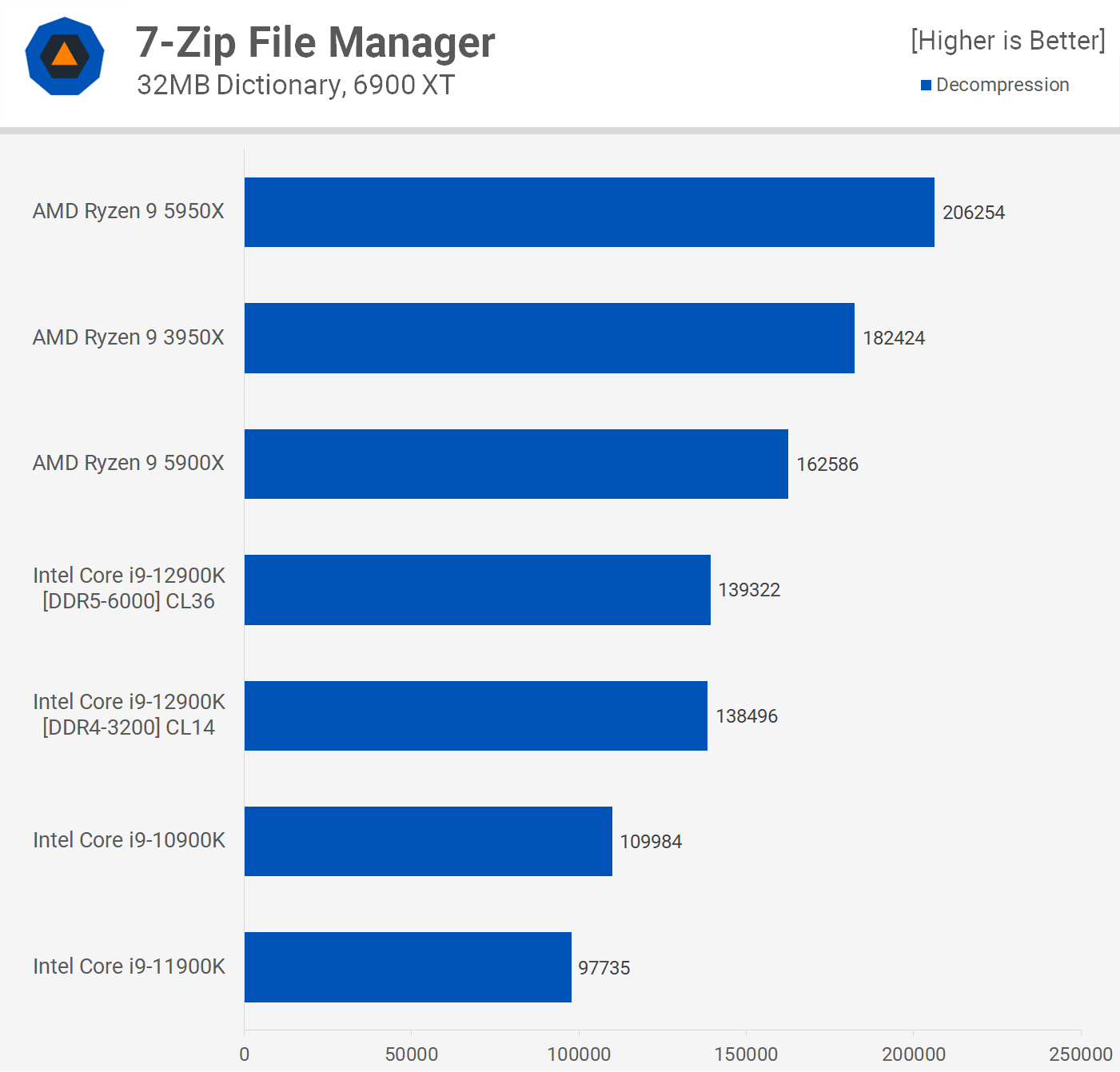

We’re not seeing the same improvement for decompression performance and here the 12900K is quite average, lagging miles behind AMD despite offering a little over 40% more performance when compared to the 11th generation.

Moving on to the Corona benchmark, we see that the 12900K is slightly slower than the 5950X, taking 8 to 12% longer to complete the render depending on the memory used, with DDR5-6000 improving performance by 4%. Compared to the 5900X, the 12900K reduced render time by at least 8%, so here it does slot in well between the two Ryzen CPUs, which is where its MSRP places it.

Performance in Adobe Premiere Pro 2021 is quite strong, roughly matching the 5950X when paired with DDR4 memory. Then we’re looking at a small 5% performance boost with DDR5 memory and while that’s enough to place the 12900K ahead of the 5950X, it’s not nearly enough to justify the current price premium DDR5 memory commands.

That strong single-core performance is on full display in Photoshop 2022 with the 12900K’s DDR4 configuration boosting performance by 14% over the Ryzen 9 5950X and 5900X.

Then with DDR5 memory the score is improved by a further 7%, making the 12900K the dominant desktop processor in this application.

The Adobe After Effects 2022 results are interesting. Here the 12900K offered an 11% performance increase over the 5950X when paired with DDR4 memory, while DDR5-6000 boosted performance by a further 7% making this configuration almost 20% faster than the 5950X.

Factorio is a new addition to our battery of benchmarks and this simulation game hasn’t been included with the rest of the games as we’re not measuring frames per second, but rather updates per second. This automated benchmark calculates the time it takes to run 1000 updates. This is a single thread test which apparently relies heavily on cache capacity.

As you can see the 12900K performs exceptionally well relative to the 5950X and in particular its predecessor, the 11900K. The upgrade to DDR5 memory boosted performance by a mere 3%, but the 12900K was 22% faster than the 5950X and 30% faster than the 11900K regardless.
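Because the benchmark reports a time for a fixed number of updates, converting a run to updates per second is a single division. A minimal sketch; the timings below are made-up placeholders, not results from this review, and the function names are illustrative:

```python
def updates_per_second(updates: int, elapsed_seconds: float) -> float:
    """Convert a fixed-update benchmark run into an updates-per-second rate."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return updates / elapsed_seconds


def speedup_pct(ups_a: float, ups_b: float) -> float:
    """How much faster A is than B, in percent, based on throughput."""
    return (ups_a / ups_b - 1.0) * 100.0


# Hypothetical timings for 1000 updates (illustrative only).
fast = updates_per_second(1000, 5.0)    # 200.0 UPS
slow = updates_per_second(1000, 6.25)   # 160.0 UPS
print(f"{speedup_pct(fast, slow):.1f}% faster")  # 25.0% faster
```

Comparing throughput rather than elapsed time keeps "X% faster" consistent with how the frame rate results are expressed elsewhere in the review.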

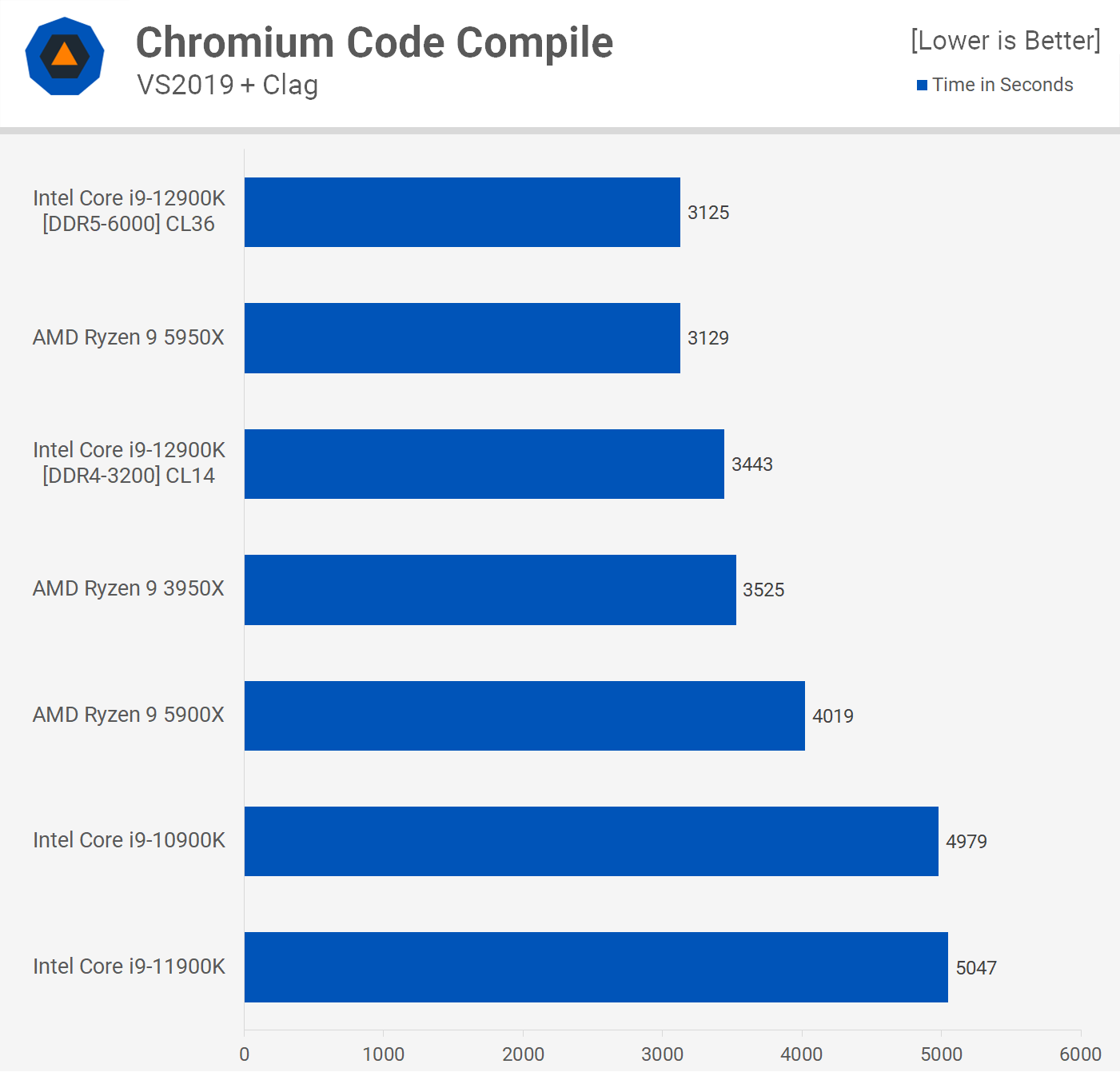

When it comes to code compilation performance, the 12900K is no slouch, delivering 5950X-like performance, at least when using uber expensive DDR5 memory. Using DDR4 memory the 12900K performance is more comparable to that of the 3950X as the faster DDR5 memory boosted performance by 10%. Overall a good result as the 12900K again finds itself positioned between the 5900X and 5950X.

The last application we’re going to look at is Blender, where the 12900K again finds itself positioned between the 5900X and 5950X. It was also over 50% faster than Intel’s previous generation flagship part, the 11900K.

Power Consumption

As great as application performance looks, it does come at the cost of enormous power usage. Whereas the 5950X saw total system consumption reach 221 watts with a bit faster performance, the 12900K peaked at over 350 watts. That’s a 60% increase in total system power usage which is an astronomical difference.
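As a quick check on that figure, the sketch below computes the gap from the two quoted system totals. It treats 350 W as a floor because the text says "over 350 watts", so the result is a lower bound; the function and variable names are illustrative.

```python
def pct_increase(baseline: float, value: float) -> float:
    """Percentage by which `value` exceeds `baseline`."""
    return (value - baseline) / baseline * 100.0


ryzen_5950x_watts = 221.0   # total system draw quoted for the 5950X
core_12900k_watts = 350.0   # lower bound: the 12900K "peaked at over 350 watts"

delta_watts = core_12900k_watts - ryzen_5950x_watts
increase = pct_increase(ryzen_5950x_watts, core_12900k_watts)
print(f"{delta_watts:.0f} W more, {increase:.1f}% higher")  # 129 W more, 58.4% higher
```

A peak of roughly 354 W would correspond to the full 60%, which fits a reading of "over 350 watts".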

Power usage with either DDR4 or DDR5 memory was the same.

Finally, for those wondering how the 5950X consumed less power than the 5900X, this isn’t a new thing, it is to be expected and it’s due to the higher silicon quality of the 16-core part.

For those wondering about clock speeds, here’s a look at the 12900K under all-core load in Cinebench R23. As you can see the P-cores clocked to roughly 4.9 GHz at 4888 MHz with the E-cores at 3.7 GHz, so both are close to the maximum clock frequency as we’re not running with any enforced power limits which is now the default behavior.

Then for single core load the 12900K is clocking to 5.1 GHz for the P-cores and 3.9 GHz for the E-cores in this workload.

As for operating temperatures, the 12900K is a power-hungry CPU in these kinds of all-core workloads. As such, I was unable to avoid intermittent throttling with the Corsair iCUE H115i Elite Capellix installed, as the CPU regularly ran at or very near 100°C. These coolers do have mounting pressure issues with the 12900K. I did my best to address that while also applying ample thermal paste, but ultimately this kind of setup isn’t ideal for prolonged all-core load.

Switching over to the MSI CoreLiquid S360 did help and the mounting pressure was more consistent across the IHS. This cooler did avoid throttling of any kind, but the core temperature still peaked at 96°C, though temperatures for the most part hovered in the low to mid 80s.

Although we’ve only had time for limited testing it does appear as though 240mm AIO liquid coolers are out of the question as 12900K owners will require larger 360mm versions. That means for a decent cooler you’ll be paying over $100 with most of the Corsair and MSI models priced at or above $200. Meanwhile, the Ryzen 9 5950X can be kept cool with a half decent air-cooler.

Gaming Benchmarks

Enough serious business, now it’s time to play.

Starting with F1 2021, we run into some odd data that probably should be sorted by the 1% low results. When sorted by the average frame rate like what we see here, the Ryzen 9 5900X and 5950X are the faster gaming CPUs, breaking the 400 fps barrier.

The 12900K using DDR4 memory was comparable to the 10900K, at least when looking at the average frame rate. If we look at the 1% low we see that the 12900K is actually 28% faster and 15% faster than the Ryzen 9 processors. Interestingly, DDR5 slightly boosted the average frame rate, but it also slightly reduced the 1% low. So the strong 1% low result can’t be attributed to the use of DDR5 memory, given DDR4 was faster.
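To see why the average and the 1% low can rank CPUs differently, it helps to compute both from raw frame times. The sketch below uses one common definition of the 1% low (the average frame rate over the slowest 1% of frames). That definition is an assumption, since the review does not state how its capture tool derives the figure, and the sample data is synthetic.

```python
def fps_summary(frame_times_ms: list[float]) -> tuple[float, float]:
    """Return (average fps, 1% low fps) for a list of frame times in milliseconds."""
    n = len(frame_times_ms)
    if n == 0:
        raise ValueError("need at least one frame time")
    avg_fps = 1000.0 * n / sum(frame_times_ms)

    # Slowest 1% of frames (at least one frame), longest frame times first.
    k = max(1, n // 100)
    slowest = sorted(frame_times_ms, reverse=True)[:k]
    low_fps = 1000.0 * k / sum(slowest)
    return avg_fps, low_fps


# Synthetic runs: A is faster on average but has an occasional long frame.
run_a = [2.0] * 99 + [12.0]
run_b = [2.4] * 100

for name, run in (("A", run_a), ("B", run_b)):
    avg, low = fps_summary(run)
    print(f"run {name}: avg {avg:.0f} fps, 1% low {low:.0f} fps")
# run A: avg 476 fps, 1% low 83 fps
# run B: avg 417 fps, 1% low 417 fps
```

Run A wins on average frame rate while run B wins on the 1% low, which is the kind of split that makes the sort order of a chart matter.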

Next up we have Tom Clancy’s Rainbow Six Siege where the 12900K mixes it up with the 5950X and 5900X. It was slightly slower when paired with DDR4 memory, though we’re talking about a minor 2.5% difference to the 5900X. Then when compared to the 11900K, the 12900K was 22% faster on the average and a massive 43% faster for the 1% low.

Despite using dialed down quality settings, we see that Horizon Zero Dawn for the most part remains GPU limited. As a result, the 5900X, 5950X and 12900K all delivered comparable performance with ~190 fps on average. Needless to say, when GPU limited DDR5 has no chance to offer any extra performance.

It’s a similar story when testing Borderlands 3. These high-end CPUs are more than powerful enough to get the most out of the 6900 XT using what are medium-type quality settings. The 12900K was slightly better when it came to 1% low performance, and again this isn’t due to the adoption of DDR5 memory as we see much the same when using DDR4.

Moving on to Watch Dogs: Legion we have some eye-catching numbers. This is the first example where DDR5 offers a noteworthy performance uplift, boosting the average frame rate from 142 fps to 164 fps, a substantial 15% performance boost.

The 12900K was comparable to the 5900X and 5950X using DDR4 memory, but 15% faster using DDR5. If only DDR5 were just 15% more expensive.

We’re back to GPU limited results in the new Marvel’s Guardians of the Galaxy. For those wondering why I’d include such a title in our suite of game benchmarks, that’s because this accurately represents how most games play. That is to say, they’re entirely GPU limited when using high-end CPUs, despite the fact that we have dialed down quality settings with a 6900 XT at 1080p.

Shadow of the Tomb Raider can be very CPU demanding and here we’re not using the built-in benchmark but the village section. The 12900K performed very well, taking out the top spot on our graph, but quite oddly this was using DDR4 memory.

When paired with DDR5, the 12900K was a match for the 5950X when looking at the average frame rate, while the 1% low was 4% faster. But the point here is that we have an example where the 12900K was faster using DDR4 memory and by a substantial 9% margin.

Moving on to Hitman 3 we find the 12900K at the top of our graph again, this time using either DDR4 or DDR5 memory. We’re looking at Ryzen 9 5950X or 5900X-like performance, which is very good but AMD wasn’t blown out of the water in this instance.

In Age of Empires 4 we find the 12900K offering a substantial performance improvement, especially when it comes to 1% low performance. Interestingly, DDR4 offered the best performance in this title, boosting the average frame rate by 6%.

The 12900K was 20% faster than the 11900K and 25% faster than AMD’s 5950X and 5900X. So some seriously impressive results there for the new 12th gen processor.

Despite being a very CPU intensive title, Cyberpunk 2077 remains GPU limited in our testing, even with slightly dialed down visual quality settings. There’s not much to learn from these results, though it was interesting to again see DDR5 slightly down on DDR4 by a few frames.

Power Consumption in Gaming

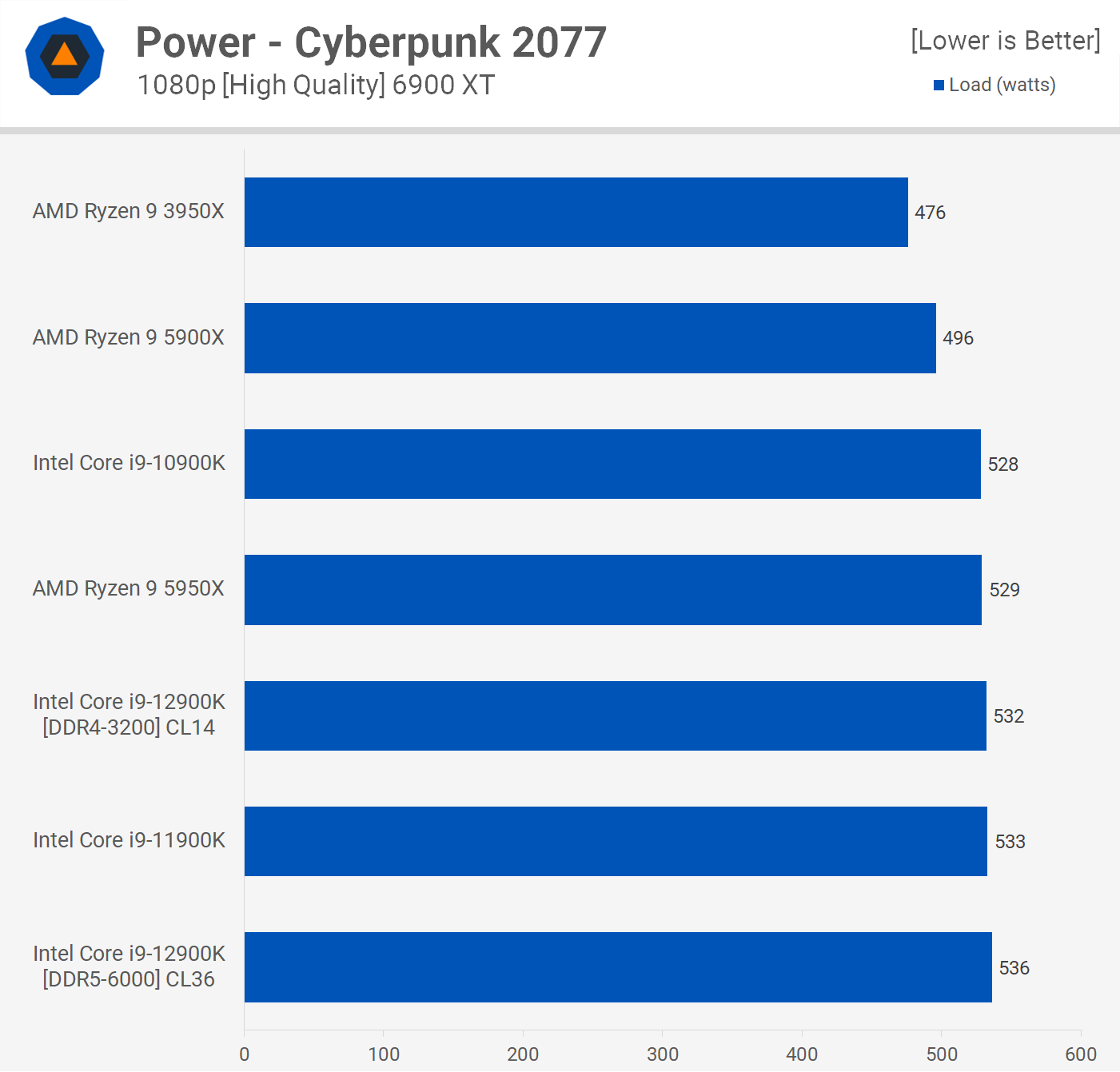

When we checked out Blender before we saw how extreme the power requirements are for the Core i9-12900K relative to parts like the Ryzen 9 5950X. However, while that data is relevant and accurate for high core count workloads like Blender, it’s not accurate when looking at gaming.

Most games don’t fully utilize 8-core processors, let alone 16-core processors and while some like Cyberpunk 2077 can spread the load quite evenly across a wide range of cores, they’re never going to be fully or even heavily utilized by even today’s most demanding games.

That being the case, power usage isn’t what you might expect and as you can see here the 10900K, 11900K, 12900K and Ryzen 9 5950X are all comparable. Even the 5900X, which uses less power when gaming, isn’t noticeably better in this regard, lowering total system usage by just 7% when compared to the 12900K using DDR5. So for gamers the whole power consumption argument is a bit pointless, at least until games fully utilize these CPUs, at which point you’d want something faster anyway.

10 Game Average

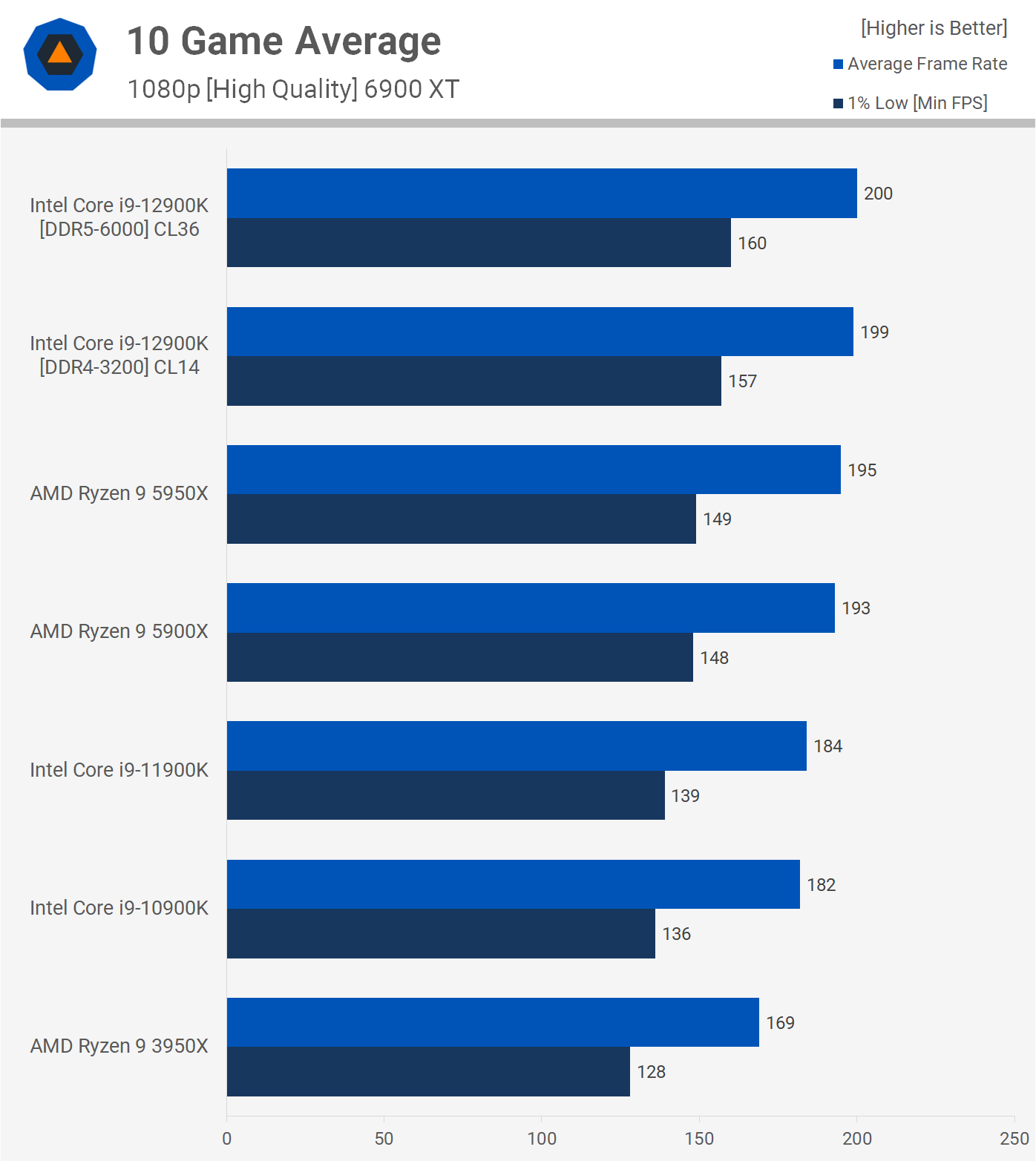

Intel seems right to claim the world’s best gaming performance once again, even if it’s by a small 2.5% margin on average if we use the collected data so far. If we focus on the 1% low data, then the Core i9-12900K with DDR5 memory was 7% faster than the 5950X, which is a decent performance gain.

Speaking of DDR5 memory, we’re looking at a minor 2% increase over DDR4 performance, seen when looking at the 1% lows, and we’re using extreme DDR5-6000 CL36 memory that will probably cost more than most Z690 motherboards.

Truth be told, there’s no difference in gaming performance between most of these high-end CPUs as you’ll almost always end up GPU limited in today’s games, even with an RTX 3090 or 6900 XT at 1080p with dialed-down quality settings.

Windows 10 Performance

Before wrapping up this review, we wanted to take a quick look at Windows 10 performance to see if there’s any difference to using Windows 11 with the Core i9-12900K and DDR5-6000 memory. Starting with the Cinebench R23 multi-core test, we see that performance drops by 5% under Windows 10, not a huge decline but it is noteworthy.

As the single-core data shows, the drop in performance seen in the multi-core test is a result of the Windows 10 scheduler, which wasn’t prioritizing the P-cores as well. For the single-core test we’re just using the P-cores, so performance remains much the same.

Interestingly, 7-Zip compression and decompression performance goes unchanged when using Windows 10. We expected to see a small decline, but that wasn’t the case.

The DDR5 configuration was slower than DDR4 when tested with Windows 11 in Shadow of the Tomb Raider, and that’s interesting because here we’re seeing better performance under Windows 10 and this result is comparable to Windows 11 using DDR4. It’s a substantial difference as well, the 1% low is 10% greater using Windows 10, for example.

This made no sense, so we went back to Windows 11 for a re-test but the data was all accurate. So for whatever reason the 12900K is performing better in this CPU demanding title under Windows 10.

It seems performance is a bit all over the place when comparing Alder Lake on Windows 11 vs. Windows 10 — this might deserve a detailed 30-40 game benchmark comparing the two in the near future. But for now, we’ve also got some Rainbow Six Siege benchmarks which suggest stronger performance using Windows 11, which is what Intel recommends. In this test, Windows 11 boosted performance by 6% for the average frame rate and 9% on the 1% low.

Is Intel Back? What We Learned.

That’s all the Core i9-12900K tests we have run so far, and we can draw some pretty solid conclusions based on them. Of course, there are many more tests we’d like to conduct and no doubt will in the future, but we didn’t want to bog this review down with stuff like memory scaling, IPC testing, tinkering with cores, and so on. We’ve also skipped overclocking for now as it’s extremely underwhelming and this CPU is power hungry and hot enough as it is.

Do expect us to cover these and more topics soon, but for now let’s focus on the data we have at hand to determine if the Core i9-12900K is worth buying, and if it is, who should buy it?

DDR4 vs DDR5

First things first, DDR4 vs DDR5. This will help simplify the discussion. DDR5 offers very little extra performance over quality DDR4-3200 memory, and let’s remember we used DDR5-6000, which is about as good as it gets right now, and expensive, too.

For gaming, you’re looking at a performance boost of a few percent. The best example was Watch Dogs: Legion, which saw up to a 20% improvement with DDR5. That was an outlier in our testing, though it’s likely a good indication of how the two will compare in the future. How relevant that is in the short term is hard to say, but probably not very.

But even if we pretend that in CPU limited scenarios DDR5 memory will regularly deliver up to 20% greater gaming performance, it’s still not worth the premium from a value standpoint. Right now you can purchase a quality DDR4-3200 memory kit like the one we used for $190, with more sensible CL16 versions going for just $100. DDR5 on the other hand appears to be starting at $280 for a 5200 CL40 kit, which is much slower than what we used for testing.

Meanwhile, Corsair’s Dominator Platinum DDR5-5200 CL38 memory is selling for $330, and that’s still slower than what we used. But if we compare that to DDR4-3200 CL16 memory, it means you’re paying over 3x more for at best 20% greater performance. However, the reality of the situation is about a 2% performance improvement on average.
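The value argument can be made concrete by dividing relative performance by relative price. A rough sketch using only the kit prices and uplift figures quoted above; it compares memory cost in isolation and ignores the rest of the system, which is a simplifying assumption, and the function name is illustrative:

```python
def relative_value(perf_gain_pct: float, price: float, baseline_price: float) -> float:
    """Performance per dollar relative to the baseline kit (1.0 means equal value)."""
    perf_ratio = 1.0 + perf_gain_pct / 100.0
    price_ratio = price / baseline_price
    return perf_ratio / price_ratio


ddr4_price = 100.0   # DDR4-3200 CL16 kit
ddr5_price = 330.0   # Corsair Dominator Platinum DDR5-5200 CL38 kit

print(f"price ratio: {ddr5_price / ddr4_price:.1f}x")                          # 3.3x
print(f"best case:   {relative_value(20.0, ddr5_price, ddr4_price):.2f}")     # 0.36
print(f"average:     {relative_value(2.0, ddr5_price, ddr4_price):.2f}")      # 0.31
```

Even the best case flatters DDR5 here, because the uplift figures were measured with faster DDR5-6000 memory than the $330 kit.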

And even that could be seen as an exaggeration as we’re testing at 1080p, with dialed-down quality settings, using a 6900 XT. So if you were to use this extreme graphics card at 1440p, with the same quality settings, there’s almost no situation where DDR5 will offer any performance improvement, let alone 20%.

Frankly, the only people who should consider DDR5 are those who buy 6900 XTs or RTX 3090s and stick them on overkill $700 motherboards. For everyone else, DDR4 makes way more sense. That being the case, we’re going to recommend all prospective Alder Lake buyers go with DDR4 for now, and only consider DDR5 once it gets very close to DDR4 in terms of pricing.

Core i9 vs. Ryzen 5000

The Core i9-12900K costs $650, making it 13% cheaper than the Ryzen 9 5950X and 25% more than the 5900X. If we factor in the price of a motherboard, we find that Intel Z690 boards start at $200 for products such as the Gigabyte Z690 UD, followed by the $220 MSI Z690-A. Those are entry-level boards though. At minimum you’d want to spend closer to $300 on something like the Gigabyte Z690 Aero G for $280, or the Asus Prime Z690-A for $300 to pair it with your flagship Core i9 CPU.

On the AMD side, we know that the Asus TUF Gaming X570-Plus for $160 is an excellent motherboard and for those seeking a higher end option the MSI X570 Tomahawk WiFi is great at $260. There are cheaper B550 options, but we feel those investing in a 5900X or 5950X are better off going X570.

Based on this information, it looks like you’re only saving $20-$40 on the motherboard when going with AMD, which is not a big deal at the high-end.
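The price gaps quoted in this section can be reproduced from the listed prices. In the sketch below, pairing the entry-level boards with each other and the higher-end boards with each other is an assumption about how the $20-$40 figure was reached; the names are illustrative.

```python
def pct_diff(price: float, reference: float) -> float:
    """Signed percentage difference of `price` relative to `reference`."""
    return (price - reference) / reference * 100.0


cpu_price = {"12900K": 650, "5900X": 520, "5950X": 750}
print(f"12900K vs 5950X: {pct_diff(cpu_price['12900K'], cpu_price['5950X']):+.0f}%")  # -13%
print(f"12900K vs 5900X: {pct_diff(cpu_price['12900K'], cpu_price['5900X']):+.0f}%")  # +25%

# (tier, Z690 board price, X570 board price); the pairing is assumed.
board_pairs = [
    ("entry-level", 200, 160),   # Gigabyte Z690 UD vs Asus TUF Gaming X570-Plus
    ("higher-end", 280, 260),    # Gigabyte Z690 Aero G vs MSI X570 Tomahawk WiFi
]
savings = {tier: z690 - x570 for tier, z690, x570 in board_pairs}
for tier, amount in savings.items():
    print(f"{tier}: ${amount} saved with AMD")
# entry-level: $40 saved with AMD
# higher-end: $20 saved with AMD
```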

Now, if you’re just gaming, the 12900K is great, but it’s not amazing as it does cost 25% more than the 5900X and we saw a 4% performance increase on average, in a suite of mostly CPU intensive gaming. So if you’re seeking high-end gaming performance without venturing beyond the point of diminishing returns, the 5900X may be a better deal or really even the 5800X or one of the lesser Alder Lakes (which we’ll review soon). But if you’re seeking ultimate gaming performance then sure, the Core i9-12900K is it.

Then for workstation-type CPU-intensive applications, our original assessment of DDR5 memory stands: you’re better off with DDR4 for the most part. But if your workload does benefit from DDR5 and time is money, then it’s a no-brainer; go with the newer and faster memory. For everyone else, DDR4 will make more sense.

All that Power

The elephant in the room is power consumption, and this is of particular concern for core-heavy workloads. Productivity performance was fairly similar between the Ryzen 9 5950X and Core i9-12900K, but the AMD chip consumes significantly less power for these workloads. That will be a trade-off, and depending on your particular use case AMD might be faster anyway. We think the 16 performance cores of the 5950X, which sip power relative to what we see from the 12900K, are the better bet, but it will be workload dependent. The Ryzen 9 5950X is also much easier to keep cool given it’s sucking down around 130 watts less.

Still, the Core i9-12900K is a mighty impressive CPU and it certainly signals Intel’s back and once again competing with AMD in the high-end desktop PC segment.

Deal or No Deal?

So would we buy the Core i9-12900K? If we were in the process of piecing together a high-end gaming PC, we think we might. It’s not the obvious choice, but assuming motherboard pricing is competitive in your region, it’s without question a viable option.

If waiting is an option, we’d probably feel it necessary to wait and see what AMD’s V-Cache brings early next year. AMD’s updated platform, due later next year, will support DDR5 as well, but that’s likely a year away at this point.

Right now we could easily go either way: the Ryzen 9 5900X or the more modern Core i9-12900K. We don’t think you can go wrong with either option. This kind of tight competition is great and if one brand wants to guarantee the sale, they’ll have to get more aggressive on pricing.

That’s all we have to say on the Core i9-12900K for now. Tomorrow we’ll be checking out the Core i7-12700KF and then the Core i5-12600K, followed by an avalanche of Alder Lake benchmark content. Stay tuned for that.