NASA’s next great eye in the sky, the golden-mirrored James Webb Space Telescope, passed a key review this week, bringing it one step closer to launching in November and observing new parts of the cosmos for scientists here on Earth.

That’s good news for the United States’ space agency, which has spent the last several weeks trying to troubleshoot issues with its current window on the universe, the Hubble Space Telescope.

The storied telescope that has revolutionised our understanding of the cosmos for more than three decades is experiencing a technical glitch. According to NASA, the Hubble Space Telescope’s payload computer, which operates the spacecraft’s scientific instruments, went down suddenly on June 13.

During its more than 30 years in the sky, the Hubble Space Telescope has captured stunning images like this one of the Messier 106 galaxy [File: STScI/AURA, R Gendler via AP]

As a result, the instruments on board meant to snap pictures and collect data are not currently functioning. The agency’s best and brightest have been working diligently to get the ageing telescope back online and have run a barrage of tests but still can’t seem to figure out what went wrong.

“It’s just the difficulty of trying to fix something orbiting 400 miles [644 kilometres] over your head instead of in your laboratory,” Paul Hertz, the director of astrophysics for NASA, told Al Jazeera.

“If this computer were in the lab, it would be really quick to diagnose it,” he explained. “All we can do is send a command, see what data comes out of the computer, and then send that data down and try to analyse it.”

Hubble’s legacy

When Hubble launched on April 24, 1990, scientists were excited to peer into the vast expanse of space with a new set of “eyes”, but they had no idea how much one telescope would change our understanding of the universe.

The telescope has looked into the far reaches of space, spying the most distant galaxy ever observed — one that formed just 400 million years after the big bang.

This image taken with the Hubble Space Telescope shows a hot, star-popping galaxy that is farther than any previously detected, from a time when the universe was a mere 400 million years old [File: Space Telescope Science Institute via AP]

Hubble has also produced stunning galactic snapshots like the Hubble Ultra Deep Field.

Captured in a single photograph are nearly 10,000 galaxies, many of which formed long before the Earth even existed. Each galaxy is a vast and thriving stellar hub, where hundreds of billions of stars were born, lived their lives, and died.

The light from these galaxies has taken billions of years to reach Hubble’s sensors, making it a time machine of sorts – one that takes us on a journey through time to see them as they were billions of years ago.

Hubble has also spied on our cosmic neighbours, discovering some of the moons around Pluto.

Its observations showed us that almost every galaxy has a supermassive black hole at its centre, and Hubble has also helped scientists create a vast three-dimensional map of an elusive, invisible form of matter that accounts for most of the matter in the universe.

Called dark matter, the enigmatic substance can’t be seen. Scientists only know it exists by measuring its effects on ordinary matter. Thanks to Hubble’s suite of scientific instruments, scientists were able to create a 3D map of dark matter.

What went wrong

Scientists have been planning for Hubble’s inevitable demise for quite some time. Over the past 31 years, the telescope has seen its fair share of turmoil.

Shortly after it launched, NASA discovered that something wasn’t quite right: Hubble’s primary mirror was flawed. Fortunately, the problem could be fixed, as the telescope is the only one in NASA’s history that was designed to be serviced by astronauts.

Astronauts Steven L Smith and John M Grunsfeld serviced the Hubble Space Telescope during a December 1999 mission [File: NASA/JSC via AP]

Over its lifetime (and the course of the agency’s shuttle programme), groups of NASA astronauts have repaired and upgraded Hubble and its instruments five different times.

When the space shuttle retired in 2011, it meant that Hubble would be on its own. If the telescope were in trouble, ground controllers would need to troubleshoot remotely.

So far that has proven to be effective. That is, until June 13.

Just after 4pm EDT (20:00 GMT), an issue with the observatory’s payload computer popped up, putting the telescope and its scientific instruments into safe mode.

Hubble has two payload computers on board: the main computer and a backup for redundancy. These computers, each a NASA Standard Spacecraft Computer-1 (or NSSC-1), were installed during one of the telescope’s servicing missions in 2009; however, they were built in the 1980s.

They’re part of the Science Instrument Command and Data Handling (SI C&DH) unit, a module on the Hubble Space Telescope that communicates with the telescope’s science instruments and formats data for transmission to the ground. It also contains four memory modules (one primary and three backups).

The current unit is a replacement that was installed by astronauts on shuttle mission STS-125 in May 2009 after the original unit failed in 2008.

When the main computer went down in June, NASA tried to activate its backup, but both computers are experiencing the same glitch, which suggests the real issue is in another part of the telescope.

Currently, the team is looking at the various components of the SI C&DH, including the power regulator and the data formatting unit. If one of those pieces is the problem, then engineers may have to perform a more complicated series of commands to switch to backups of those parts.

This image made by the NASA/ESA Hubble Space Telescope shows M66, the largest of the Leo Triplet galaxies [File: NASA, ESA/Hubble Collaboration via AP]

NASA says it’s going to take some time to sort out the issue and switch over to the backup systems if necessary. That’s because turning on those backups is a riskier manoeuvre than anything the team has tried so far.

The operations team will need several days to see how the backup computer performs before it can resume normal operations. The backup hasn’t been used since its installation in 2009, but according to NASA, it was “thoroughly tested on the ground prior to installation on the spacecraft”.

Part of the trouble with Hubble is that the observatory was designed to be serviced directly. Without a space shuttle, there’s just no way to do so.

“The biggest difference between past issues and this one is there’s no way to replace parts now,” John Grunsfeld, a former NASA astronaut, told Al Jazeera.

But, he added, “The team working on Hubble are masters of engineering. I’m confident they will succeed.”

Looking to the future

The James Webb Space Telescope, scheduled to launch in November, is expected to expand upon Hubble’s legacy. The massive telescope, essentially a giant piece of space origami, will unfold its shiny golden mirrors and peer even further into the universe than Hubble ever could. Its infrared sensors will let scientists study stellar nurseries, the heart of galaxies and much more.

#Webb moves a big step closer to launch!!!

Webb has just successfully passed its “Final Mission Analysis Review”, moving it closer to seeing farther!

Find out more: https://t.co/NiVRXHFQ0G #WebbSeesFarther #WebbFliesAriane #ExploreFarther @esa @nasa @csa_asc pic.twitter.com/pOrnlqfI7J

— ESA Webb Telescope (@ESA_Webb) July 1, 2021

Hubble has shown us that nearly all galaxies have supermassive black holes at their centres, the brightest of which we call quasars. These incredibly bright objects can tell us a lot about galaxy evolution, as the jets and wind produced by a quasar help to shape its host galaxy.

Previous observations have shown that there is a correlation between the masses of supermassive black holes and the masses of their galaxies, meaning that quasars could help regulate star formation in their host galaxy.

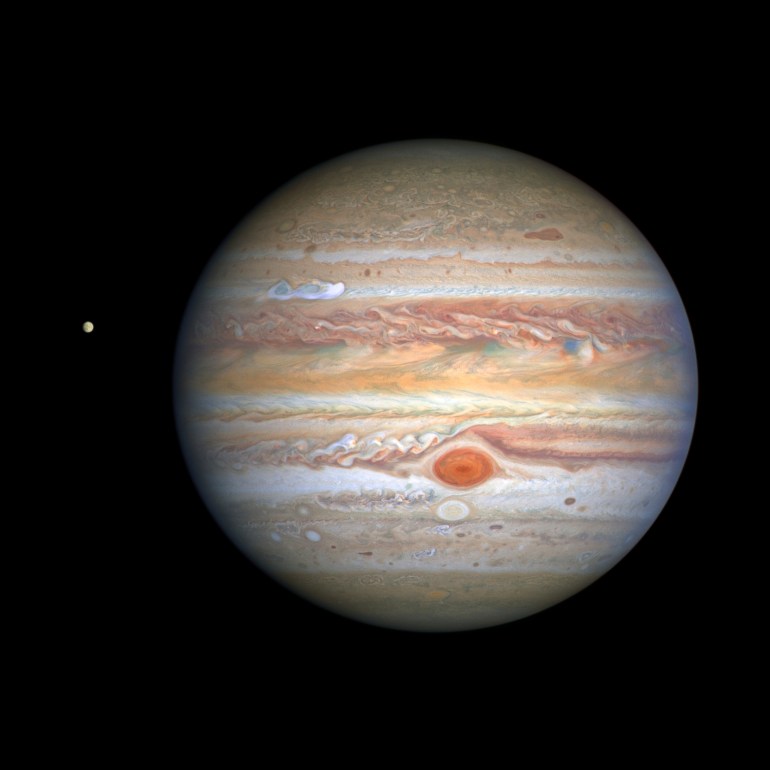

In August 2020, the Hubble Space Telescope captured this image of the planet Jupiter and one of its moons, Europa, at left, when the planet was 653 million kilometres (406 million miles) from Earth [File: NASA/ESA via AP]

“We see black holes at a time when the universe was only 800 million years old that are almost as massive as the biggest we see today, so they evolved extremely early,” Chris Willott of the Canadian Space Agency told Al Jazeera.

“By studying their galaxies, we can see what the impact of such extreme black holes is on the early formation of stars in these galaxies.”

Through Hubble’s eyes, scientists cannot detect individual stars in the galaxies with these ultra-bright quasars, but with Webb, scientists hope they will be able to see not only individual stars, but also the gas from which these stars form.

That means the Webb telescope has the potential to truly revolutionise our understanding of galaxy formation and evolution, the same way that Hubble did for our knowledge of the universe over the past three decades.