Photo by K. Kassens

United States Army John F. Kennedy Special Warfare Center and School

Whether it is a Fortune 500 company or an elite military unit, good or bad, every organization has some type of systematic process to recruit, assess, select, and train its personnel. Although these processes vary widely in design and implementation, all organizations ultimately share the same goal: field the force with the right people and accomplish the organizational mission. During the summer of 2020, SWCS embarked on an ambitious initiative to overhaul its training pipelines holistically, paying particular attention to information management and the inclusion of data analytics to improve the overall efficiency of assessing, selecting, and training Army Special Operations Forces (ARSOF). In the midst of this overhaul, a simple yet highly relevant question was posed: “Why?” Why do we do it? What does Assessment and Selection (A&S) accomplish that other job search methods cannot? The purpose of this article is to address this question, to reflect within the ARSOF community on why this process is so important, and to demystify a process that to others may seem like some sort of obscure ritual or rite of passage.

The Art of Risk Management

Talent acquisition is a constant balance between the need to fill the force with exceptionally qualified individuals and the need to ensure the force is adequately manned to serve the nation. This sets up what appears to be a direct tradeoff between maintaining quality (or standards) and achieving sufficient quantity. We cannot and do not accept this notion. As the Special Operations Forces (SOF) maxim states, SOF cannot be mass produced; each individual is hand-picked and carefully trained for their job. At the same time, Special Operations leadership cannot risk leaving the nation unable to respond with SOF capabilities. The stakes are simply too high to accept risk by sacrificing either quality or quantity. The goal, then, is to cast as wide a net as possible in recruitment and then let the risk management process unfold from there.

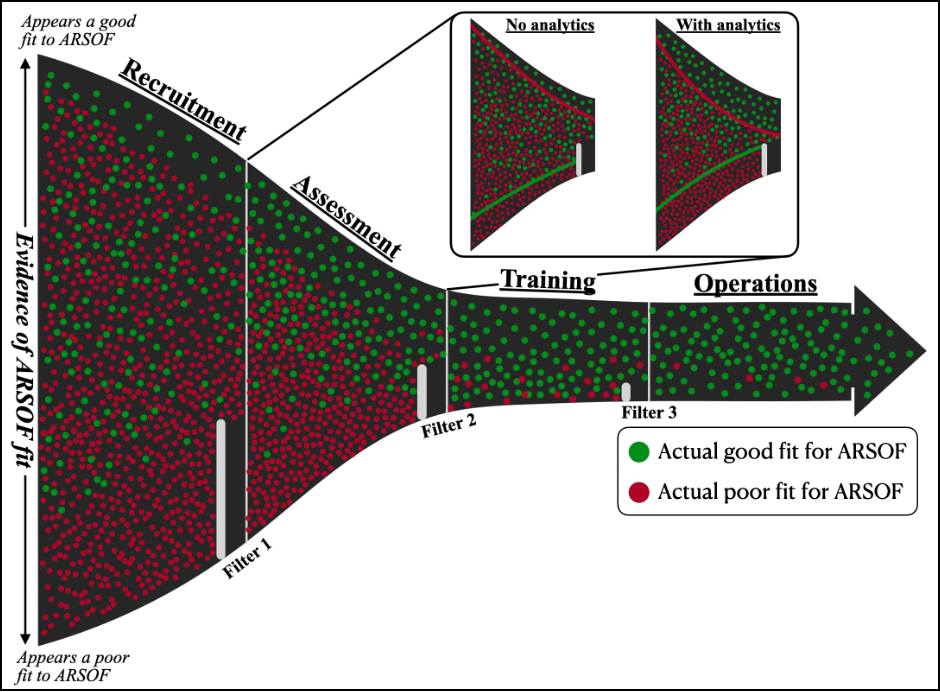

Figure 1 illustrates how to conceptualize the ARSOF talent acquisition process. It includes four phases: Recruitment, Assessment, Training, and Operations. At recruitment, the talent population is effectively random, and the probability of finding the right person for the job is low. As the process progresses, the population moves through a series of filters that serve as key decision points, necking down the talent pool at each phase and increasing the probability of finding the right person for the job. The “input”, or recruitment, side (far left) includes a pool of potential recruits, some of whom are truly a good fit for ARSOF (denoted with green dots) while others are a poor fit (denoted with red dots). A “good fit” in this case means someone who will perform at or above the unchanging operational standards of exceptional ethical and moral judgement, and with high physical, psychological, and cognitive fitness, throughout their career. By filter three, the ARSOF talent acquisition process has little to no vulnerability to random chance: nearly every individual is a “good fit” for ARSOF.

Photo by Jason Johnston (Photo taken from DVIDS)

Importantly, we cannot actually know this truth about any individual in advance; we can only infer it through process. Although a soldier could look good on paper, there is no way to know at recruitment whether someone is a good or poor fit. This requires the organization to estimate “goodness of fit” based on collected evidence. Depending on the amount and type of evidence, a poor fit can look a lot like a good one, so the goal is to separate the two populations as much as possible. Each of the three major filters during talent acquisition is built on the collection and processing of evidence, and each is designed for its phase to cut as many of the poor fit cases as possible while having minimal impact on the good fit population. In statistics, these two aims are referred to as precision (in our case, rejecting only poor fit cases without impacting good fit cases) and recall (finding as many of those poor fit cases as possible). Ultimately, the details of the filter design, both the evidence collected and the analytics performed, reflect the artistry of risk management.
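To make the two terms concrete, here is a minimal sketch in Python that treats "rejecting a poor fit candidate" as the event being measured. The ten-candidate pool, the labels, and the function name `rejection_metrics` are all hypothetical, invented for illustration; in practice the true fit of a candidate is not known in advance, which is exactly why it has to be estimated.

```python
def rejection_metrics(is_poor_fit, rejected):
    """Precision and recall where the 'positive' event is rejecting a poor fit.

    precision: of everyone the filter rejected, the share who were truly poor fit
    recall:    of everyone who was truly poor fit, the share the filter rejected
    """
    true_rejections = sum(1 for p, r in zip(is_poor_fit, rejected) if p and r)
    total_rejected = sum(rejected)
    total_poor = sum(is_poor_fit)
    precision = true_rejections / total_rejected if total_rejected else 0.0
    recall = true_rejections / total_poor if total_poor else 0.0
    return precision, recall


# Hypothetical pool of 10 candidates: True marks a (normally unknowable) poor fit.
is_poor_fit = [True, True, True, True, False, False, False, False, False, False]
# Hypothetical filter decisions: True means the filter rejected the candidate.
rejected = [True, True, True, False, True, False, False, False, False, False]

precision, recall = rejection_metrics(is_poor_fit, rejected)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

In this made-up pool the filter rejects four candidates, three of whom are poor fit, and misses one of the four poor fit candidates, so both measures come out at 0.75. A filter that rejected only the single most obvious poor fit would score perfect precision but low recall, which is the tradeoff each phase has to manage.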

The process starts with recruitment, where the goal is a blunt filter that removes as many clearly poor fit cases as possible with effectively no impact on the pool of potential good fit candidates; that is, aim for high precision while accepting sub-optimal recall. This filter has to be balanced against reality: what is readily available in routine service records and what recruiters can realistically accomplish with their resources across an array of non-standardized recruitment locations around the globe. Most of this filter is practical in nature, identifying those potential recruits who are at least minimally physically fit, have promotion potential based on rank and time in grade, and so on. The available evidence at this point is not particularly effective at sorting the two populations, but it does allow SWCS to rule out many definite poor fit candidates.

At A&S, SWCS standardizes the assessment and conducts targeted examinations that focus on those qualities that do a great, though not perfect, job of distinguishing between a good and a poor fit. Moreover, this can be done at relatively low cost in both time to the candidates and resources to the organization. Thus, A&S becomes the primary phase for sorting good fit from poor fit after the more pragmatic filter of recruitment is applied. This much tighter filter results in a population that is generally of very good fit, with only a few missed cases of poor fit making it through. Unfortunately, this comes at the cost of some good fit cases, though there is always a concerted effort to limit the impact on this population. In the future, as data collection and analytics improve, SWCS will be able to better differentiate the poor fit from the good fit cases, allowing better rejection of poor cases while impacting fewer of the good. The inset in Figure 1 illustrates how analytics can both lower the ceiling for poor cases and raise the floor for good cases. This results in better distinction between the two populations and a smaller homogeneous region in the center, where the two cannot be told apart.
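The effect described for the Figure 1 inset can be sketched numerically. The example below is an illustration only: it assumes each population's assessment score follows a normal distribution, and the means, spreads, and cutoff are hypothetical numbers, not A&S data. "Improved analytics" is modeled simply as less spread within each population, which is one way to picture a lower ceiling for poor cases and a higher floor for good ones.

```python
from statistics import NormalDist


def filter_errors(good, poor, cutoff):
    """Return (share of good fit rejected, share of poor fit passed) at a cutoff."""
    good_rejected = good.cdf(cutoff)      # good fit scoring below the cutoff
    poor_passed = 1.0 - poor.cdf(cutoff)  # poor fit scoring above the cutoff
    return good_rejected, poor_passed


# Hypothetical score distributions as (good fit, poor fit); not real data.
scenarios = {
    "baseline": (NormalDist(mu=60, sigma=10), NormalDist(mu=40, sigma=10)),
    "improved": (NormalDist(mu=60, sigma=6), NormalDist(mu=40, sigma=6)),
}

results = {}
for label, (good, poor) in scenarios.items():
    good_rejected, poor_passed = filter_errors(good, poor, cutoff=50)
    overlap = good.overlap(poor)  # shared area where the populations look alike
    results[label] = (overlap, good_rejected, poor_passed)
    print(f"{label}: overlap={overlap:.3f} "
          f"good rejected={good_rejected:.3f} poor passed={poor_passed:.3f}")
```

Under these assumptions, tightening the two distributions shrinks the overlapping region and lowers both kinds of error at the same cutoff, so the filter improves without having to trade missed poor fit cases against lost good fit cases.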

By the time soldiers get to training, most of the population will be a good fit, as A&S has filtered out most of the poor fit candidates. The filter points in this phase are usually limited to significant and uncorrectable failures in academics, behavioral issues that were previously unobserved, or unforeseeable circumstances such as major injury. This phase helps remove the last few poor fit candidates who are still functionally differentiable from good fit candidates.

The last phase, operations, focuses on the operational force, where the goal is to assume minimal risk; specifically, the risk of a soldier failing standards or harming the mission or the nation. At this point, it is expected that ARSOF personnel have the necessary knowledge, skills, and attributes to perform their jobs and represent the enterprise. Unfortunately, no effort to predict long-term human behavior is perfect. Some poor fit candidates will make it through the entire process regardless of the A&S system used, which translates to a certain level of risk assumed by the respective organization and its leadership. However, this level of risk is acceptable and unquestionably better than the alternative of not utilizing an A&S course at all.
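The cumulative effect of the three filters can be pictured with a short calculation. This is a sketch under loudly stated assumptions: the starting pool and every pass rate below are hypothetical values chosen only to mirror the qualitative description of each phase (a blunt recruitment filter, a tight A&S filter, a light training filter). They are not measured ARSOF figures.

```python
def run_funnel(good, poor, filters):
    """Apply each filter in order and record the surviving pool after each one."""
    history = []
    for name, good_pass_rate, poor_pass_rate in filters:
        good *= good_pass_rate
        poor *= poor_pass_rate
        history.append((name, good, poor, good / (good + poor)))
    return history


# (phase, share of good fit passed, share of poor fit passed); all hypothetical.
filters = [
    ("recruitment", 0.98, 0.60),  # blunt: removes clear poor fit, spares good fit
    ("A&S", 0.85, 0.10),          # tight: removes most poor fit, costs some good fit
    ("training", 0.97, 0.50),     # light: removes a few remaining poor fit
]

history = run_funnel(good=300.0, poor=700.0, filters=filters)
for name, good, poor, share in history:
    print(f"after {name}: good={good:.0f} poor={poor:.0f} good-fit share={share:.1%}")
```

With these illustrative rates the share of good fit candidates rises at every phase, yet the number of poor fit candidates never reaches zero. That residue is the risk the operational force and its leadership assume.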

Photo by K. Kassens (Photo taken from DVIDS)

Photo by Maj. Stuart E. Gallagher