Bob Sutor, VP of IBM’s Quantum Ecosystem Development

IBM

Technology moves fast. Scientists are already developing the next generation of computing: quantum computing. Over the past year, I’ve had the opportunity to speak several times with Bob Sutor, VP of IBM’s Quantum Ecosystem Development, about what is happening in the field. While quantum computing isn’t a household term, Sutor shared that it isn’t new. Quantum computing’s roots extend back to the early 1900s and the development of quantum mechanics.

Quantum computing uses quantum mechanics concepts such as superposition, entanglement and interference. Yet these terms can be confusing for anyone without a deep background in physics. Rather than try to explain each of them, I suggest watching this video in which WIRED challenged Dr. Talia Gershon, Director of Hybrid Cloud Infrastructure Research at IBM Research, to explain quantum computing to five different people. Gershon was previously IBM’s Senior Manager of Quantum Research. The video is brilliant.

Sutor also offered a simpler way to describe it: we can think of computing as falling into two camps, classical and quantum. Classical computing is what we use today, such as processors, servers on the internet, mainframes, and high-performance computing. But why was quantum computing developed, and why should we care about it?

Sutor described how certain problems simply can’t be solved with a classical computer. As Gershon explains in the video, a traditional computer can run out of capacity to solve a problem. In other cases, a classical computer takes too long to complete the computation. Hence, IBM and others are working on a different type of computer designed to overcome those constraints.

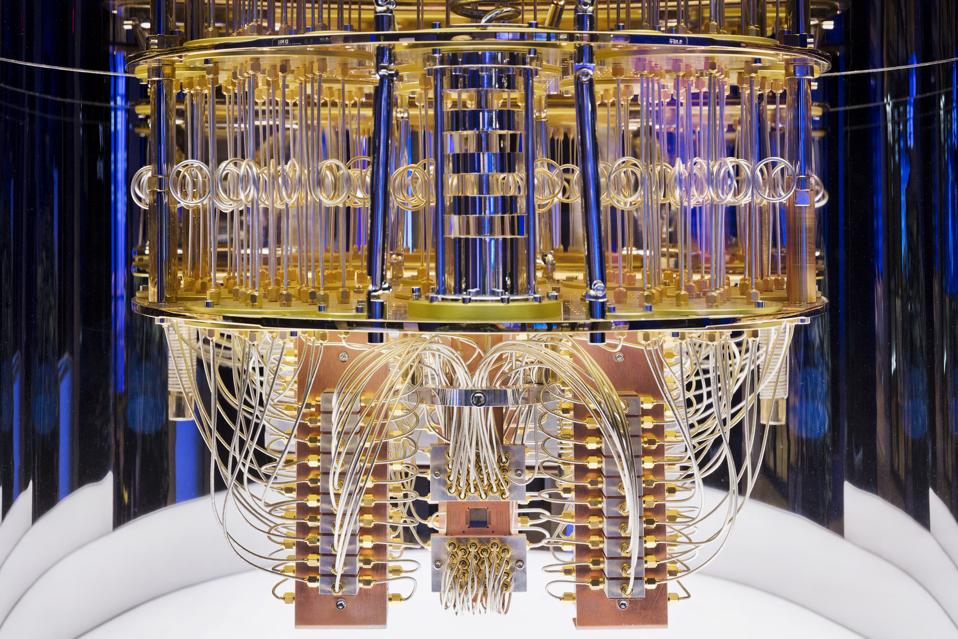

Image of the interior of IBM’s Quantum Computer

IBM

Why don’t we have it today?

The move to quantum requires constructing different hardware, software, physical enclosures and even a new programming model. A quantum computer uses qubits, which are quantum bits. It’s an extension of the idea of zeros or ones, but with quantum, we have more than a binary choice of a zero or a one. One way to describe this is a game of heads or tails. When a coin lands, it’s either heads or tails. However, while it is spinning before it lands, it is neither heads nor tails. It’s both. This same principle applies to a qubit in computing, which can be in a state of zero, one, or a superposition that represents both. This flexible state provides extra dimensions in which to compute.
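To make the coin analogy a little more concrete, here is a minimal Python sketch. It assumes the standard textbook description of a qubit as two complex amplitudes, alpha for 0 and beta for 1, whose squared magnitudes give the odds of reading each value when the qubit is measured. The function names are illustrative only and are not part of any IBM tool.

```python
import math
import random


def measurement_probabilities(alpha: complex, beta: complex) -> tuple:
    """Return (P(0), P(1)) for the qubit state alpha|0> + beta|1>."""
    p0 = abs(alpha) ** 2
    p1 = abs(beta) ** 2
    # A valid qubit state must have probabilities that sum to 1.
    if not math.isclose(p0 + p1, 1.0, rel_tol=1e-9):
        raise ValueError("|alpha|^2 + |beta|^2 must equal 1")
    return p0, p1


def measure(alpha: complex, beta: complex, rng: random.Random) -> int:
    """Simulate one measurement: the superposition collapses to 0 or 1."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1


# The "spinning coin": an equal superposition of 0 and 1.
amp = 1 / math.sqrt(2)
print(measurement_probabilities(amp, amp))  # both values are about 0.5

rng = random.Random(7)
shots = [measure(amp, amp, rng) for _ in range(10_000)]
print(sum(shots) / len(shots))  # fraction of 1s, close to 0.5
```

Each individual measurement still yields a single 0 or 1, just as the coin eventually lands. The superposition only shows up in the statistics over many runs, which is why quantum programs are typically executed many times.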

If we add the concept of entanglement (intertwining qubits so that their behavior is correlated), the number of states the qubits can represent together doubles every time we add another qubit: one qubit spans two states, two span four, three span eight, and four span 16. This exponential growth makes quantum computers well suited to certain complex computing problems.
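The doubling can be seen in a small classical simulation. This is a minimal sketch assuming the standard state-vector model: n qubits are described by 2**n complex amplitudes, a Hadamard gate puts one qubit into superposition, and a CNOT gate entangles it with a second qubit. The helper names are hypothetical; real toolkits such as Qiskit provide their own gate APIs.

```python
import math
import random


def zero_state(n_qubits):
    """All qubits start at 0: 2**n amplitudes, all 0 except index 0."""
    state = [0j] * (2 ** n_qubits)
    state[0] = 1 + 0j
    return state


def apply_hadamard(state, target):
    """Put qubit `target` (bit `target` of each basis index) into superposition."""
    h = 1 / math.sqrt(2)
    new = state[:]
    for i in range(len(state)):
        if not (i >> target) & 1:  # handle each (bit=0, bit=1) pair once
            j = i | (1 << target)
            new[i] = h * (state[i] + state[j])
            new[j] = h * (state[i] - state[j])
    return new


def apply_cnot(state, control, target):
    """Flip qubit `target` in every basis state where `control` is 1."""
    new = state[:]
    for i in range(len(state)):
        if (i >> control) & 1:
            new[i ^ (1 << target)] = state[i]
    return new


def sample(state, rng):
    """Measure all qubits at once; returns a basis index such as 0b11."""
    r, cumulative = rng.random(), 0.0
    for index, amp in enumerate(state):
        cumulative += abs(amp) ** 2
        if r < cumulative:
            return index
    # Floating-point rounding fallback: return the last possible outcome.
    return max(i for i, amp in enumerate(state) if abs(amp) > 0)


# Memory needed to describe n qubits doubles with each one: 2, 4, 8, 16.
for n in range(1, 5):
    print(n, "qubits ->", len(zero_state(n)), "amplitudes")

# Entangle two qubits (a "Bell state"): H on qubit 0, then CNOT 0 -> 1.
bell = apply_cnot(apply_hadamard(zero_state(2), 0), control=0, target=1)
rng = random.Random(42)
outcomes = {format(sample(bell, rng), "02b") for _ in range(1000)}
print(outcomes)  # only '00' and '11': the two qubits always agree
```

Note that the simulation's list grows as 2**n, so every added qubit doubles the memory a classical computer needs just to track the state. That is one intuition for why classical machines run out of capacity on some problems.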

But there are challenges

In an ideal world, you’d create a qubit, add more qubits, and apply operations (quantum gates) to the qubits to achieve the desired outcome. However, it’s not quite that simple. As Scientific American put it, “Quantum computers are exceedingly difficult to engineer, build and program. As a result, they are crippled by errors in the form of noise, faults and loss of quantum coherence, which is crucial to their operation and yet falls apart before any nontrivial program has a chance to run to completion.”
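To illustrate why loss of coherence cuts programs short, here is a rough sketch under simplifying assumptions: coherence decays exponentially with a characteristic time T2, and every layer of gates takes a fixed amount of time. The T2 of 100 microseconds, the 0.5-microsecond layer time, and the 50% cutoff are hypothetical values chosen for illustration, not IBM specifications.

```python
import math


def remaining_coherence(elapsed_us: float, t2_us: float) -> float:
    """Fraction of coherence left after elapsed_us, assuming exponential decay."""
    return math.exp(-elapsed_us / t2_us)


def max_sequential_layers(t2_us: float, layer_time_us: float, threshold: float = 0.5) -> int:
    """Most gate layers that fit before coherence drops below `threshold`."""
    # Solve exp(-t / T2) = threshold for t, then count whole layers in that time.
    time_budget_us = -t2_us * math.log(threshold)
    return math.floor(time_budget_us / layer_time_us)


# Hypothetical device: T2 = 100 us, each gate layer takes 0.5 us.
T2_US, LAYER_US = 100.0, 0.5
depth = max_sequential_layers(T2_US, LAYER_US)
print(depth)  # 138 layers before coherence falls below 50%
print(round(remaining_coherence(depth * LAYER_US, T2_US), 3))
```

Under this model, doubling T2 or halving the gate time roughly doubles the depth budget, which is one reason hardware improvements translate directly into longer programs that can run to completion.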

Qubits are sensitive to heat and any outside interference. Because heat creates computing errors, the qubits must be kept in an extremely cold chamber. For example, the IBM quantum computer sits in a chamber where the temperature is 0.015 Kelvin (15 millikelvin). As a comparison, outer space has an average temperature of 2.7 Kelvin, about 180 times warmer.
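Why does heat matter so much? A standard physics estimate, the Boltzmann distribution, gives the chance that thermal energy alone bumps a qubit from 0 into its higher-energy 1 state. The sketch below assumes a qubit whose two states are separated by a 5 GHz transition, a typical order of magnitude for superconducting qubits but an assumption here, not a figure from IBM.

```python
import math

PLANCK_J_S = 6.62607015e-34      # Planck constant (exact SI value)
BOLTZMANN_J_K = 1.380649e-23     # Boltzmann constant (exact SI value)


def thermal_excited_population(freq_hz: float, temp_k: float) -> float:
    """Probability a two-level system is found in its upper state at temp_k."""
    ratio = PLANCK_J_S * freq_hz / (BOLTZMANN_J_K * temp_k)  # energy gap / thermal energy
    return 1.0 / (1.0 + math.exp(ratio))


QUBIT_FREQ_HZ = 5e9  # assumed 5 GHz qubit transition (illustrative)
for label, temp_k in [("IBM fridge", 0.015), ("outer space", 2.7), ("room", 300.0)]:
    p = thermal_excited_population(QUBIT_FREQ_HZ, temp_k)
    print(f"{label:>11} at {temp_k:>7} K: {p:.2e} chance of a spurious 1")
```

Under these assumptions, a qubit at 15 millikelvin is almost never excited by heat, while at the 2.7 Kelvin of outer space it would be close to a coin flip. That is why the chamber must be so much colder than space.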

Noise causes errors that affect the computation. Some of these errors come from small manufacturing defects, while others occur if the system applies too much energy to a qubit. The physical design of the system has to shield the qubits from as much real-world noise as possible, and the noise that remains has to be reduced through a process called error mitigation. IBM published a paper in Nature describing a clever solution that uses noise to help eliminate noise.
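How can noise help eliminate noise? One published technique that fits the description is zero-noise extrapolation: run the same circuit several times while deliberately amplifying the noise by known factors, then extrapolate the results back to the zero-noise limit. The sketch below applies Richardson extrapolation to made-up measurements. The scale factors and values are hypothetical, and it is offered as an illustration rather than a reproduction of IBM's paper.

```python
import math


def richardson_zero_noise(scales, values):
    """Extrapolate measured values to noise scale 0 with a Lagrange polynomial.

    scales: noise amplification factors (e.g. 1 = native noise, 2 = doubled).
    values: the expectation value measured at each scale.
    """
    if len(scales) != len(values) or len(set(scales)) != len(scales):
        raise ValueError("need one value per distinct noise scale")
    estimate = 0.0
    for i, (s_i, v_i) in enumerate(zip(scales, values)):
        # Lagrange basis polynomial for point i, evaluated at scale 0.
        weight = 1.0
        for j, s_j in enumerate(scales):
            if j != i:
                weight *= s_j / (s_j - s_i)
        estimate += weight * v_i
    return estimate


# Made-up data: the ideal (noise-free) answer is 1.0, and noise decays it.
scales = [1.0, 2.0, 3.0]
measured = [math.exp(-0.2 * s) for s in scales]  # hypothetical noisy results
print(round(measured[0], 3))                               # raw result at native noise
print(round(richardson_zero_noise(scales, measured), 3))   # much closer to 1.0
```

The key design idea is that the device's native noise level is never zero, but it can be made worse in a controlled way; measuring how the answer degrades lets you estimate what it would have been with no noise at all.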

It’s about quality, not quantity

As in the processor wars of old, it’s easy to get caught up in debating which vendor’s system has more qubits. The question in quantum should not be how many qubits a computer supports, but how many of them are high quality. A good quantum system starts at the device level. Scientists and engineers build the qubits for a device, attempt to minimize the errors in each qubit, and optimize how each one is connected to the others. By analogy, your television won’t have a clear picture if you use a poor-quality HDMI cable. It’s the same with quantum: each step is essential to ensure the highest quality.
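A back-of-the-envelope calculation shows why quality can beat quantity. Assuming, as a simplification, that gate errors are independent, the chance a circuit runs without any error is the per-gate success rate raised to the number of gates. The error rates and gate count below are hypothetical.

```python
def circuit_success(gate_error: float, n_gates: int) -> float:
    """Chance that all n_gates run error-free, assuming independent errors."""
    return (1.0 - gate_error) ** n_gates


N_GATES = 400  # hypothetical circuit, e.g. 20 qubits x 20 layers
for error in (0.01, 0.001):
    print(f"gate error {error:.1%}: success {circuit_success(error, N_GATES):.1%}")
```

With a 1% error rate, this 400-gate circuit finishes cleanly only about 2% of the time; at 0.1% it succeeds roughly two-thirds of the time. Adding more qubits does not help if each operation on them is too error-prone for the circuit to finish.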

Since quantum computing differs from classical computing, quantum computers require new metrics for measuring quality. Sutor said IBM uses a metric called Quantum Volume (QV) to measure a quantum computer’s power. QV is based on the largest random circuit of equal width and depth that the system can run successfully. Quantum computing systems with high-fidelity operations, high connectivity, large calibrated gate sets, and circuit-rewriting software toolchains should achieve higher quantum volumes. Setting the technical terms aside, this shifts the dialogue from merely stating the number of qubits to talking about the number of stable qubits (coherence) that can interact (connectivity) with each other as a system. Sutor said IBM has doubled its Quantum Volume every year since 2017.
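The core of Quantum Volume can be sketched in a few lines. In the definition IBM researchers have published, a random circuit of width and depth n "passes" when the device returns heavy outputs (the bitstrings whose ideal probability is above the median) more than two-thirds of the time, and the Quantum Volume is 2**n for the largest passing n. This simplified sketch omits the real protocol's random-circuit generation and statistical confidence test, and all the numbers are made up.

```python
import statistics


def heavy_outputs(ideal_probs: dict) -> set:
    """Bitstrings whose ideal (noise-free) probability is above the median."""
    median = statistics.median(ideal_probs.values())
    return {bits for bits, p in ideal_probs.items() if p > median}


def heavy_output_probability(counts: dict, heavy: set) -> float:
    """Fraction of measured shots that landed on a heavy output."""
    shots = sum(counts.values())
    return sum(n for bits, n in counts.items() if bits in heavy) / shots


def quantum_volume(mean_hop_by_width: dict) -> int:
    """2**n for the largest n x n circuit size whose heavy-output rate beats 2/3."""
    passing = [n for n, hop in mean_hop_by_width.items() if hop > 2 / 3]
    return 2 ** max(passing, default=0)


# Toy 2-qubit example with made-up ideal probabilities and device counts.
ideal = {"00": 0.40, "01": 0.30, "10": 0.20, "11": 0.10}
heavy = heavy_outputs(ideal)  # {'00', '01'}
counts = {"00": 410, "01": 290, "10": 180, "11": 120}
print(heavy_output_probability(counts, heavy))  # 0.7, above 2/3

# Made-up mean heavy-output rates per circuit width n (width = depth = n).
print(quantum_volume({4: 0.78, 5: 0.73, 6: 0.69, 7: 0.64}))  # 64
```

Read this way, a Quantum Volume of 64 corresponds to n = 6: the machine reliably runs 6-qubit-wide, 6-layer-deep random circuits, even on a system with 27 qubits. Each doubling of Quantum Volume means passing circuits one qubit wider and one layer deeper.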

Given the nascent state of the industry, it’s not surprising that there are differing views on the best way to measure a quantum computer’s performance. While I can’t say which approach is best, vendors must provide a framework that helps buyers understand the attributes of performance and the different ways of measuring them. Others, such as Honeywell, are starting to use Quantum Volume to state the performance of their systems.

Is quantum computing right around the corner?

Quantum computing is perceived as a technology that is very far away. And, indeed, quantum computing isn’t around the corner. However, tremendous progress happens every six months. Just last week, IBM announced that by combining a series of new software and hardware techniques to improve overall performance, it had upgraded one of its 27-qubit client-deployed systems to achieve a Quantum Volume of 64.

Real businesses are investing today

Companies such as IBM, Google, Intel and others are using this time to create better systems and better software. However, that progress doesn’t happen in a research vacuum. Real progress only happens when technology vendors work with clients to solve actual problems. IBM offers the Quantum Experience, while the IBM Q Network is the commercial version of the program, giving companies access to IBM’s latest technologies and support for business strategy engagements.

For example, Daimler is working with IBM to research how quantum algorithms for chemistry and materials science will support Daimler’s long-term goal of designing new batteries. IBM and Exxon are looking at how quantum computing can improve predictive environmental modeling and help Exxon discover new materials for more efficient carbon capture. Meanwhile, in finance, JPMorgan Chase and IBM are researching methodologies for financial modeling and risk management.

Quantum advantage versus quantum supremacy

As you read more about quantum computing, you’ll inevitably run into the concept of “quantum supremacy,” the idea that quantum computers can complete calculations that classical supercomputers can’t perform in any practical amount of time. IBM’s Sutor considers establishing quantum advantage more significant. Quantum advantage is the point where quantum computers perform specific computing tasks more efficiently (hundreds to thousands of times faster) or at a lower cost than classical computing alone. It won’t apply to every type of problem, but quantum computing will excel at specific things. He believes quantum advantage is easily achievable within this decade.

Sutor noted that quantum computing is not as simple as manufacturing qubits. There’s also science, experimental physics and engineering to refine. For example, scientists must improve quantum circuits to achieve quantum advantage. Sutor expects that we will see early instances of quantum advantage in three to five years. However, this vision requires hardware, software, and algorithms to all come together.

Advice to organizations

Quantum is exciting because it forces people to move beyond old notions of how to accomplish various computing tasks. Setting aside old assumptions allows breakthrough thinking, which is essential for companies that want to take their digital journey to the next level. As with any other IT project, you need a quantum computing champion to explore, experiment with, and evangelize the technology within your organization.

Quantum computing isn’t something you can pick up in a weekend or at the grocery store. It’s an emerging technology that is arguably five or more years away from widespread commercial use. However, an organization should begin its quantum computing education today and start to define projects that can leverage cloud-resident quantum technology.

Organizations that do this have the opportunity to create breakthroughs in areas such as material science and artificial intelligence. Many are already on this educational journey: at the recent Global Summer School, 4,000 people attended workshops and labs to learn about IBM’s open source Qiskit development platform.

Individuals can take advantage of the quantum wave as well

The evolution of quantum computing also gives people an opportunity to learn new computing skills and enter a market at its inception. Because the fundamentals of quantum differ from those of classical computing, newcomers don’t need extensive prior knowledge and don’t have to relearn existing skills.

It’s an exciting time in computing. We face real problems, such as creating vaccines, that quantum computing can help us solve faster and better. Researchers and technology vendors are supplying training and free access to computing resources that cost billions of dollars to design. Anyone with the desire and aptitude to learn a new skill has the opportunity to participate in the next wave of computing jobs regardless of race, age, or other affiliations. The world is what you make it, and individuals can use quantum computing to make it better.