(Bloomberg) — Nvidia Corp., whose powerful chips helped set the stage for the artificial intelligence boom, is now looking to address a major concern surrounding the technology: that AI bots will go rogue and cause harm.

The company is introducing software Tuesday that governs AI systems built on large language models, the technology behind OpenAI’s ChatGPT and other popular bots. The tool, called NeMo Guardrails, can keep chatbots on topic and make them less likely to offer up restricted information. It will also help prevent them from guessing wrongly or taking actions outside their purview, Nvidia said.

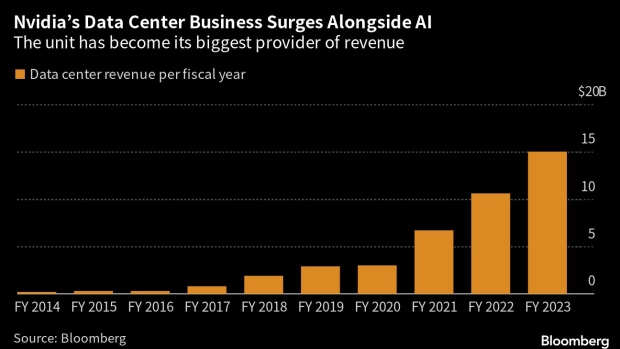

The flood of interest in ChatGPT, along with other systems that mine large data sets to generate automated answers, has the potential to change almost every industry. The trend is also poised to make a fortune for Nvidia, a pioneer of graphics cards that now gets most of its money from chips for data centers — the server farms needed to power AI. But for artificial intelligence to continue to flourish, users need to be able to trust the results that chatbots generate.

“Everyone is aware of the power of generative large language models,” said Jonathan Cohen, a vice president of applied research at Nvidia. “It’s important that they’re deployed in a way that’s safe and secure.”

Some of the largest companies in technology use Nvidia’s processors to handle AI work within data centers, and that’s helped the chipmaker weather a broader slump in the computer industry. In fact, its data center unit is now bigger than the whole company was as recently as 2020.

Nvidia is offering NeMo Guardrails as open-source software and will continue to update it. The Santa Clara, California-based company is also including it in a suite of programs that it provides to clients for a fee.

NeMo Guardrails will operate as a layer between the end user and the AI program. Using a mixture of Nvidia’s own large language model and conventional software, the system will be able to recognize when the user is asking a factual question and check whether the bot can and should answer that query. It will also determine whether the generated answer is based on facts and govern how the chatbot phrases its reply.

For example, let’s say an employee asks a human-resources chatbot if the company provides support for workers who want to adopt a child. That would pass through NeMo Guardrails and return text containing the company’s related benefits. Asking the same bot how many employees have taken advantage of that benefit would trigger a refusal to comply, because the data is confidential.
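To make the "layer between the user and the bot" idea concrete, here is a minimal, hypothetical Python sketch of a rail that routes the HR questions above to an answer, a refusal or an off-topic reply. It is not NeMo Guardrails’ actual API or configuration format. Per Nvidia, the real system relies partly on a language model to recognize what users are asking. The keyword lists and the `route` and `guarded_reply` functions are assumptions made purely for illustration.

```python
# Hypothetical sketch of a "rail" layer that sits between a user and a chatbot.
# Simple keyword rules stand in for the intent recognition a real guardrail
# system would perform; this is NOT the NeMo Guardrails API.
from typing import Callable

# Hypothetical topic rules for an HR assistant.
CONFIDENTIAL = ("how many employees", "which employees", "salary of")
OFF_TOPIC = ("revenue", "earnings", "quarterly results")
ALLOWED = ("adopt", "adoption", "parental leave", "benefits")


def route(question: str) -> str:
    """Classify a question as 'refuse', 'off_topic', 'answer' or 'unknown'."""
    q = question.lower()
    # Confidential checks run first so they win over allowed topics.
    if any(p in q for p in CONFIDENTIAL):
        return "refuse"
    if any(p in q for p in OFF_TOPIC):
        return "off_topic"
    if any(p in q for p in ALLOWED):
        return "answer"
    return "unknown"


def guarded_reply(question: str, bot: Callable[[str], str]) -> str:
    """Forward only questions that pass the rails to the underlying bot."""
    decision = route(question)
    if decision == "refuse":
        return "Sorry, that information is confidential."
    if decision == "off_topic":
        return "Sorry, that's off topic for this assistant."
    if decision == "unknown":
        return "Sorry, I can only help with HR benefits questions."
    return bot(question)


if __name__ == "__main__":
    def hr_bot(q: str) -> str:
        # Stand-in for a call to a real language model.
        return "We offer an adoption assistance benefit."

    for q in [
        "Does the company support workers who want to adopt a child?",
        "How many employees have used the adoption benefit?",
        "What was the company's revenue last quarter?",
    ]:
        print(q, "->", guarded_reply(q, hr_bot))
```

Checking confidential patterns before allowed topics is the key design choice here. A question can mention an allowed subject ("adoption") and still ask for protected data, so it must be refused rather than answered.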

If users asked the bot for nonpublic financial information on the company, they’d be told it was off topic. And to check whether the program really knows the answer and isn’t just guessing (a problem known as hallucination), the software asks the question multiple times in the background to confirm that the user isn’t getting a random but superficially plausible response. Similarly, the software can help keep a bot measured in its responses, even when users try to provoke it into replying inappropriately.
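The repeated-questioning check resembles a technique often called self-consistency checking. The idea is to sample several answers to the same question and treat disagreement among them as a warning sign. The sketch below is a simplified, hypothetical illustration, not Nvidia’s implementation. It makes three assumptions:

- `ask_model` stands in for any chatbot call.
- Exact string matching stands in for the semantic comparison a real system would need.
- The 0.6 agreement threshold is arbitrary.

```python
from collections import Counter
from typing import Callable, Optional


def consistent_answer(
    question: str,
    ask_model: Callable[[str], str],
    samples: int = 5,
    threshold: float = 0.6,
) -> Optional[str]:
    """Ask the same question several times and return the most common answer
    only if its share of the samples reaches `threshold`; otherwise return
    None, treating the answer as a likely guess (hallucination)."""
    # Normalize answers so trivial differences in case/whitespace still match.
    answers = [ask_model(question).strip().lower() for _ in range(samples)]
    answer, count = Counter(answers).most_common(1)[0]
    return answer if count / samples >= threshold else None


if __name__ == "__main__":
    def grounded_bot(q: str) -> str:
        return "Paris"  # gives the same answer every time

    varied = iter(["1912", "1915", "1921", "1908", "1930"])

    def guessing_bot(q: str) -> str:
        return next(varied)  # a different answer on every call

    print(consistent_answer("What is the capital of France?", grounded_bot))  # prints "paris"
    print(consistent_answer("When was the company founded?", guessing_bot))  # prints "None"
```

A bot that answers the same way every time passes the check. One whose answers scatter across calls is flagged, even if each individual answer sounds plausible.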

In a recent controversy, ChatGPT users described gaining access to forbidden information by asking the bot to pretend it was their dead grandmother.

Making Nvidia’s new tool free to access will let the community test it out and help make sure that it can’t be used for further abuse, Cohen said.

“Any time you open-source something, people can examine it and find a way to exploit it. That’s why we open-sourced it,” he said. “We want the community to look at it.”