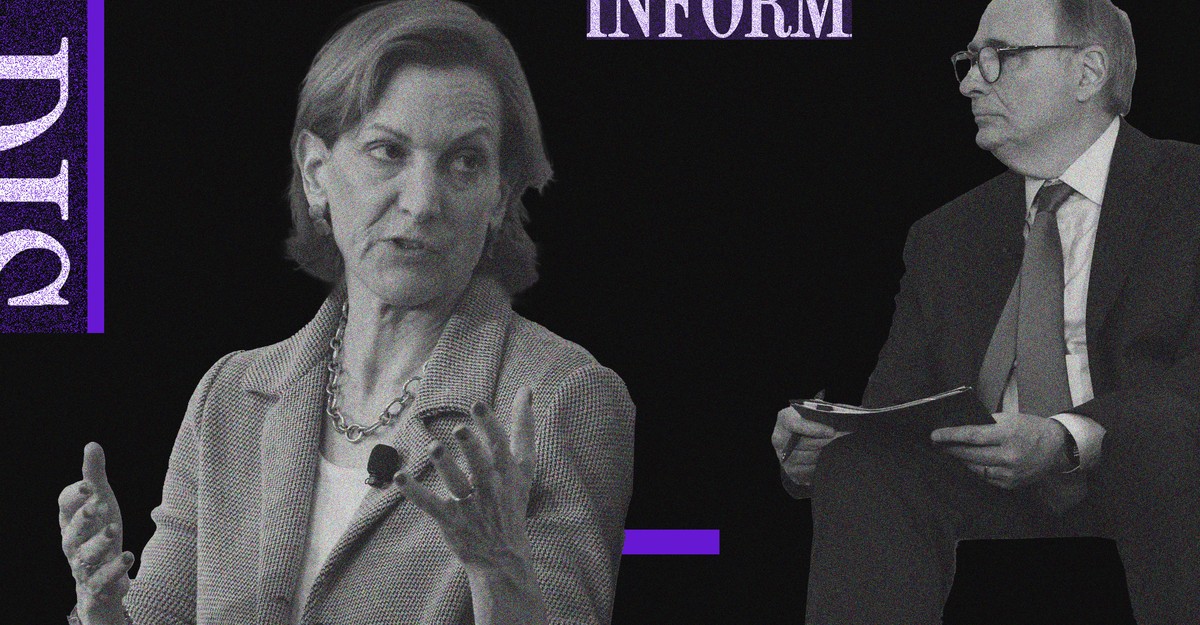

Atlantic staff writer Anne Applebaum, an expert on Eastern Europe, has long watched social media’s power with great concern. Yesterday, at Disinformation and the Erosion of Democracy, a conference hosted by The Atlantic and the University of Chicago’s Institute of Politics, she spoke with David Axelrod, the founding director of the Institute of Politics, about the dangers these platforms pose to democracy. They discussed Russia’s disinformation efforts, what makes some conspiracy theories so successful, how institutions can rebuild trust, and more. Their conversation has been lightly edited for clarity and concision.

David Axelrod: Anne, I’ve been reading all your wonderful pieces lately, scary exhortations at times, about where we are right now. You live in Poland, right across the border from Ukraine, and we’ll get to that. But you just wrote a piece called “Why We Should Read Hannah Arendt Now.” I raise that not just because she taught here and we’re very parochial, but because your first line of that very good piece was: “So much of what we imagine to be new is old; so many of the seemingly novel illnesses that afflict modern society are really just resurgent cancers, diagnosed and described long ago.” We heard Maria Ressa speak earlier about part of what is new, but I’d love you to sort it out. In the struggle between autocracy and democracy, what is old and what is new?

Anne Applebaum: When the founders of the United States of America were writing our Constitution, one of the things that they were worried about was demagogues, who might come to power by abusing the trust of the mob. That was more than two centuries ago. When they were having those discussions, most of what they were reading was about the Roman republic, which was a subject of widespread curiosity in colonial America. So what we’re talking about when we speak about autocrats and the appeal of autocrats is extremely old. It is maybe the oldest political idea in humanity.

Axelrod: It was addressed in “Federalist No. 1.”

Applebaum: “Federalist No. 1,” and Alexander Hamilton was reading about Julius Caesar. So many of these questions have been discussed and discussed over and over again. And it’s important to keep remembering that, because some of what is new is technology’s ability to draw out and amplify some of those human emotions and desires. What seems to me to be new is the way that we communicate and the ways in which that communication now amplifies, creates, and uses the desire for autocracy—or the fear and anger that lead people to demand autocracy. Nothing is new about the emotions. What is new is the ability of internet platforms to evoke them and amplify them.

Axelrod: What about the deployment of these technologies by autocrats, not just in their own countries, but as an offensive weapon? We’ve always had those efforts, but they’ve sort of been turbocharged now.

Applebaum: So in the 1980s, when the Soviet Union wanted to create a piece of disinformation, a conspiracy theory—and this is a true story—

Axelrod: How do we know it’s true?

Applebaum: Well, the story of how they did it is true. They wanted to seed the conspiracy theory that the CIA had created the AIDS epidemic. And they did it in a very specific way. An article appeared, I believe, in an Indian newspaper through a friendly journalist speculating that this might be true. Then another one in an Italian newspaper. Then they found another couple of papers that would print it, and sometimes those papers would then quote one another. At one point, they found an East German scientist who appeared in public and said he’d found some evidence that this was true. And they built the case for the idea that the CIA had created AIDS over several years. Now they can do exactly the same thing, except that it takes 10 minutes. You can have a network of fake or even semi-fake websites that will take articles that have been seeded or prepared in advance. They will then echo each other. People will then see, “Oh, look, there’s several sources repeating this story over and over again.” You can then create a botnet of trolls or even real people who you’ve organized to spread the story. And you can then move the story around the internet in 10 minutes, and you can give the impression that there’s a wave of conversation and discussion about something, even though it doesn’t exist and it’s not true. So what’s different is the scale and the speed.

Axelrod: Maria was discussing how this has been applied in the elections in the Philippines, but we experienced it with Russia—and others, but Russia primarily—interfering in our election. How new is that?

Applebaum: In a way, this is the dark side of globalization, namely that we all now live in the same information market. Whereas in the past the Russians or the Soviet Union would have had to try and get something going in the Indian market and then the Italian market and then somehow seed that into the American market, it’s now essentially one market. There’s an ease with which they can, first of all, study American politics and understand it in a way that wasn’t possible before, because they can use the same microtargeting and research tools that marketers use. They can tailor messages to different groups in specific ways, again, in the same way that marketers use. The people who are selling soap powder or washing machines—they also have ways of targeting particular audiences, changing the message whether you’re in Illinois or Florida or Wyoming, or whether you’re rich or poor. And the Russians took those tools and they used them to target Black Lives Matter activists in one part of the country and immigration activists in another part. Of course, it’s important to understand that what they did was no different from what American political campaigns do. The tools were available. And where those kinds of tools, in the past, wouldn’t have been available to outsiders or foreigners, now they are because everything’s available. They can reach into our markets and send whatever messages they want.

We’ve never tried the same in reverse. We know very little about the Russian internet, for example. I don’t think we spend a lot of time thinking about which groups in Russia would be interested in hearing our messages or the kind of language we could use to send them. But they spent many years actually studying us, and 2016 was the result of a long process.

Axelrod: Maria showed us a nuclear explosion twice, actually. That’s the thing about nuclear weapons. Once you use them, it’s hard to control. But this is a pretty cheap and efficient way to really throw a sort of a Stuxnet worm into the workings of democracies. Putin’s army may be being proved deficient right now, but they have a pretty efficient cyberarmy of a much smaller nature that doesn’t cost much to create a lot of havoc.

Applebaum: One of the oddities of the current war and one of the reasons why many people didn’t believe it would take place—despite the intelligence that the U.S. had gathered and was sharing with European colleagues—was that, up until now, the Russians had always been very cautious. Their tactic was to dismantle the enemy before you have to fight with the enemy. In other words, they seemed to want to avoid direct military conflict with the West because they believed they could disarm and undermine the West in other ways.

It wasn’t just through the use of the internet and Facebook. It was also through the funding of political parties in, really, almost every European country and democracies around the world. It was through the use of bribery but also just business contacts. They sought to create influence operations inside specific countries using mainstream businesspeople and mainstream journalists. I had a conversation with an Italian journalist a couple of days ago who wanted to talk about the Italian far right and Russian influence. I said, “Why don’t you talk about the Italian business community?” They were just as important in shaping Italian views that “We must be pragmatic, we must deal with Russia,” and so on. The same is true in Germany. So Russia had multiple ways of looking to influence conversation. Remember that their ultimate goal wasn’t just to win a popularity contest or a propaganda war. Their ultimate goal was to dismantle the European Union, to undermine NATO, and to persuade the United States to leave Europe. They had very clear strategic goals, and the information piece of it wasn’t PR; it was a part of those goals. It was an attempt to achieve them without actually fighting.

Axelrod: Yes. And in fact, it wasn’t to promote their own image; it was to destroy ours.

Applebaum: This is the big difference between modern Russian propaganda and Soviet propaganda. There was no attempt—or very little—to sell Russia as an ideal society. There was no communist paradise that was being offered. Their goal instead was to undermine us and to convince Russians that Europe is degenerate, that America is falling apart and that there is nothing to admire or to seek in democracy. Putin’s main goal was to prevent Russians from wanting democracy, because he perceived his main political challenge as coming from the democracy movement and democracy activism in Russia that once or twice during his rule has seemed to have a lot of power and force. And so, first of all, convincing Russia that democracy is nothing. It’s all cynical. It’s all fake. It’s not true. And then, secondly, seeking to reach people inside democracies and convincing them of the same. And of course, because we have those strains of our own, because our own societies are full of people who are angry or cynical or disappointed with the nature of modern America or modern Europe, he found—it’s not as if he invented Marine Le Pen, the opposition leader in France, or he didn’t invent Donald Trump. He simply found them, and they were useful vectors. And he sought to amplify them. So, in that sense, it was an amplification project rather than creating something from scratch.

Axelrod: Nor did he create the fissures that were there. He mined them. He took advantage of them.

Applebaum: He did, and then many others copied him. I do think that the Russian actions in 2016 were important partly because they showed others how it could be done, and also because of the use of leaked material from the DNC. That was a tactic that I’d seen used before. So when you leak secret information, even if the information is completely anodyne, which most of that was—I think all of it was, in fact—the public is attracted to the idea that something secret has been revealed. And then you can use that to create conspiracy theories and hysteria. And I saw that done in Poland earlier on. I saw it done in other countries as well.

Axelrod: Well, and you saw it done to you.

Applebaum: Yes.

Axelrod: After your critical reporting on the invasion of Crimea, all of a sudden you became the object of attention.

Applebaum: Yes. So there was a brief and very strange moment when a very odd journalist, who was in fact probably a former KGB agent in the United States, began writing slanderous things about me. And this is actually how I learned about how this works, because one is always more interested in the slanderous things written about oneself. It sort of catches your attention. And then you start following it around the internet. Where is it coming from? This is exactly what you just described. You see it: Oh, look, it’s on Ron Paul’s website. Who knew the libertarians were interested in me? And then it appears in another place, and you begin to see how they echo one another. And in my case, the sort of height of it, and this is about 2015, was when Julian Assange tweeted something about me being a paid agent of—I can’t remember whether it was the Polish government or the CIA, but something like that. Why is Julian Assange tweeting that about me?

Axelrod: To his 4 million followers.

So obviously 2016 was 2016, but you became very interested in this early on, and, in fact, you and a colleague started a think tank.

Applebaum: Yes, I became interested in this in 2013 and especially 2014. We’re all so proud of ourselves now that we’re resisting Russian propaganda and the Russian description of the war, but actually, in 2014, Russian propaganda was quite successful, in both the cover-up and when soldiers without uniforms marched into the Crimea and announced that they weren’t invading—there was maybe some kind of civil war starting or they were just there to protect people. Quite a lot of people in the Western world were confused. I was in London at the time and I went repeatedly on television and the radio saying, “This is a Russian invasion force.” And people said, “How do you know? They’re not wearing uniforms. Maybe they’re Ukrainian separatists.” And for a good few weeks there was a lot of confusion, and they were very successful in portraying this invasion as a non-invasion. The other thing they were successful at doing was smearing the Ukrainians. “They’re Nazis. They’re far right. They’re ethnic nationalists.” And there was a certain purchase for that view in mainstream circles in London and Washington and elsewhere.

Axelrod: What about it creating fissures between eastern and western Ukraine?

Applebaum: Russia’s been trying to do that for two decades. The point of Russian propaganda inside Ukraine was to create and amplify these divisions, whether they were over language or whether they were over interpretations of history and different ways of seeing the world. That has been their modus operandi there. And one of the effects of 2014 and the last two Ukrainian presidencies has really been to bring a lot of that to an end. And I think their invasion now is partly a recognition that the information war failed in Ukraine, and Ukraine was, partly thanks to them, unifying and rebuilding its state and rebuilding its army. It was not so easy to divide using slander and political games as it had been before.

Axelrod: So let’s go back to your journey. So you began paying a lot of attention to this all over Europe.

Applebaum: So my colleague Peter Pomerantsev and I started a think-tank program, and then we moved it to the London School of Economics, and it’s now at Johns Hopkins. Originally we were investigating: How does Russian propaganda work? And we tried to track it in different ways. We worked with a lot of other different groups who were doing this. We tried to track it. We tried to understand it. We did a couple of publications on the Swedish elections, on German elections. We also looked at various solutions, fact-checking and so on. One of the things that we pretty quickly learned was that fact-checking doesn’t work, because you have to trust the fact-checker. And if people don’t trust the fact-checker, then they don’t believe the fact-checker either. And so, so much of what we were talking about, in fact, was about creating communities of trust. How do you build those for people?

Axelrod: And that’s exactly what Maria was talking about in the project that she’s working on in the Philippines.

Applebaum: Exactly. So how do you create communities of trust? Also, how do you get to the underlying problems of division and polarization? How can you heal polarization? How can you bring people together? Because if you want people to believe something that’s true, you almost have to build a community around it. One of the other effects—and this isn’t just about the internet, and this is beautifully described by Jonathan Rauch in his recent book called The Constitution of Knowledge—is that we’ve had a decline in belief in truth and facts and science more generally. It’s not just in politics. Part of that is to do with the fact that we never really think very consciously about why it is that we think things are true. But there’s a whole ecosystem—here at universities, there’s a scientific method and there are peer-reviewed papers and there are arguments among colleagues. And there is a conversation that leads people to conclude that something is true and you arrive at it through a series of institutions. That’s true in journalism. It’s true in academia. It’s true in government. You have ombudsmen and inspectors and so on, and you reach conclusions based on these networks. If you disrupt those networks and make people feel no sense of faith in those networks—they hate universities, they hate journalists, they hate the government, they hate bureaucrats—then you suddenly find that what is true and what is not true is disputed. And so part of the solution to this problem, and I think there are some regulatory solutions, but there’s also a problem of re-creating communities of trust, and this may be something that both media and philanthropy can think about.

Axelrod: I want to get to solutions, but I want to continue on your journey. Part of that journey, once you formed this think tank and once you started doing these studies, took you here to the U.S. You met with policy makers within the government in 2016, and you tried to call their attention to—

Applebaum: This was slightly earlier, in 2015. So we did a big investigation into Russian disinformation, mostly in Central and Eastern Europe. And we pulled together all the existing evidence at that time. We put it into a report by Peter Pomerantsev and Edward Lucas, another colleague. Then we took the report to Washington and we took it around the Hill and we went to the State Department and elsewhere. We showed how it was working and how it was influencing politics. And the reaction we got—and remember, this was April 2015—was, “Well, this is all very upsetting and distressing, and it’s really too bad for Slovakia that they have this terrible problem. Maybe we’ll think of a State Department project that can help the Slovaks.”

Axelrod: This goes to your point, Maria, that Americans tend to think they’re immune.

Applebaum: Even I thought Americans would be immune. And as the 2016 election unfolded, I watched, my jaw dropping, as I saw slogans that I knew had originated in the Russian media appearing in the U.S. election campaign.

Axelrod: Talk a little bit more about that. What did you see in 2016 that you thought was symptomatic of what you knew were patterns of behavior?

Applebaum: There were several things. One of them was, there were these slogans. “Hillary Clinton will start WWIII.” “Obama created ISIS.” These are slogans and ideas that originally ran in the Russian press, and then Trump would use them in his campaign speeches. And so I could see this direct connection there. But there were also tactics that looked familiar to me. So this leak of not-very-interesting people’s emails from the Democratic National Committee. And then the spinning of those emails into a million different conspiracy theories. As nobody probably remembers now, Pizzagate was one of those. Pizzagate came out of the emails because some of the emails referred to meetings at a pizza restaurant.

Axelrod: This was that story about a pedophilia ring, right?

Applebaum: And “cp,” “cheese pizza,” is meant to be a symbol for “child pornography.” And so people read into the emails this story about child pornography at this particular pizza restaurant in Washington. And, as we know, at the height of the madness a poor guy from North Carolina drove up to Washington with a shotgun and came to the restaurant to free the children who were in the basement. And then he discovered there was no basement.

Axelrod: Well, remind me to order sausage next time.

Applebaum: But the technique: Create this idea that there is something secret that has been revealed and then spin off. There was a kind of Catholic conspiracy theory that came out of that. There were a whole bunch of things. This is something I’d seen before. This happened in Poland in 2014, 2015 as well. So I knew somebody was learning something from someone.

Axelrod: I should ask you: Your husband was the foreign minister in Poland and is still a member of European Parliament. What was his experience like as a member of government?

Applebaum: Well, mostly his experience was very good, but he was famously taped at a restaurant along with other people. And these tapes of conversations were released, and in none of the conversations was any crime committed. There was nothing illegal in any of them. But the off-the-record commentary was—again, it was exactly the same thing. It was twisted into complex conspiracy theories. And the tapes were made by somebody who had connections to the Russian coal industry.

Axelrod: And as you pointed out, you did studies in a number of different countries. What were the threads of commonality that you saw between them?

Applebaum: When you look at the Russian efforts in political campaigns, they’re often very similar. In some European countries, they support both the far left and the far right. And to some extent, they may do that here, too. It was a combination of funding for far-right parties, and sometimes particular people or the creation of business opportunities for them. One of the funders of the Brexit campaign, for example, in the U.K., had a business opportunity created for him by the Russians. That doesn’t mean he was bribed and he’s not a KGB agent, but these opportunities would be presented to you. And this clearly happened in the circles around Matteo Salvini, in Italy and other places. And then the disinformation tactics would often be about getting people to focus on whatever it was that made them afraid. So fear of immigration, fear of Muslim terrorism, which was very important in 2015–16 in Europe. Fear of immigration, even in countries that didn’t have any immigrants. One of the countries where this was done most successfully—and this was not Russia; this was its own thing—was in Hungary. And Hungary is not a country with very many Muslim immigrants, but the fear that they were coming and this narrative that we need to protect Hungarians from this outside threat was extremely effective. It was effective in other places, too, focusing on that and also looking for ways to divide people over gender and LGBT culture wars. This is something that the Russians have also consistently done inside Russia, but also in other countries.

There was a very brilliant study of Russian television, around about 2016, ’17, ’18, which looked at how Europe was portrayed on Russian TV. And the study found, to make a long story short, that there were lots and lots of news articles about Europe on the three main Russian channels, and they were all negative—maybe one or two exceptions. Almost invariably the implication was: Europe is degenerate, and crazy things are happening. In Germany, gay couples are allowed to take children away from straight couples. Gay couples have more rights than straight people. Sometimes these things would be based on real incidents, sometimes not. But always the distortion was that there’s a moral threat coming from Europe. But this is exactly the same kind of language that was used inside Germany or France—that there’s a moral threat coming. It’s an existential threat to your way of life. And it’s connected to the institutions of your country and of your democracy, and so what you need to do is resist those institutions.

Axelrod: Are you talking about Germany and France in the 20th century, or currently?

Applebaum: This is current. Another tactic that’s very important to understand is the conspiracy theory. Sometimes they work; sometimes they don’t. Usually they work because they play on something deeper and something real that people do fear. And so in this country, a very, very successful conspiracy theory was that President Barack Obama was born in Kenya, and therefore he’s not American, and therefore he’s an illegitimate president.

Axelrod: I have some recollection of that.

Applebaum: I didn’t take it seriously. “Okay, some crazy people believe this.” But up to 30 percent of Americans did believe it at some point or another. And if you do believe that, think about what it means. It means our president is illegitimate. And that means that the White House and Congress and the FBI and the CIA and the media are all lying to us. The institutions of the state have made someone illegitimate into the president, and that means that the whole system is rotten. The whole country is falling apart. It’s not what it’s meant to be. It’s been taken over by enemies, and it’s not real or it doesn’t represent us anymore. And it was actually a very powerful and important moment of change, I think, in the American public. There’s a very similar version of this that happened in Poland. It was a set of conspiracy theories around a plane crash that killed one of the presidents. And for similar reasons, it had a very deep echo. People in Poland were spooked by it. The crash happened in Russia. Had the Russians plotted it? Was there a cover-up? And this was not just that people thought something crazy had happened, but it undermined faith in the whole system. So if you find the right conspiracy theory and if it has enough purchase and it echoes enough with things that people really are afraid of, then you can undermine people’s trust in democracy. And this is a tactic that the Russians have used over and over and over again.

Axelrod: In that sense, the same dynamic that causes this to be a profitable strategy for social-media platforms makes it a profitable strategy for political insurgency.

Applebaum: Conspiracy theories spread because what the good ones do—

Axelrod: A good conspiracy theory.

Applebaum: Successful ones. Effective ones. What they do is they seem to provide an explanation for mysterious facts or things that seem strange to people. And so they function as a story that gives people the sensation Now I understand it, this thing that was bothering me, that I couldn’t understand. You know: How did a Black person with a strange name become president of the United States? That makes no sense. Here’s the explanation: “He’s not.” Or: How did this plane crash kill the president of Poland? It couldn’t have been an accident. Here’s the explanation: “There was a secret plot.” And people find it very satisfying, and they click on it and they pass it on, and, as Maria has just brilliantly described, the architecture of Facebook is such that the things that make people angry or afraid are the things that move fastest.

Axelrod: Well, let’s talk about solutions. You started down that road, and Maria was very specific about her ideas about what we needed to do. You’ve done a series of studies. Your think tank, Arena, has done a series of studies. In each study, you offer prescriptions that suggest themselves from the research that you’ve done. Just, first of all, briefly say how you do that research. And talk about the solutions that you think are most important.

Applebaum: So the research is always a group of partners, people who study the internet. We usually try to bring together different groups. Sometimes we do a lot of polling, trying to understand divisions. Sometimes we use people who can follow Twitter bots across the internet. We’ve tried different kinds of things. So they’re usually projects that bring people together. This is, I should stipulate, fairly small-scale. There are other people who do these things that are much bigger-scale than we do.

The solutions, I think, go in two or three categories. I think there is a regulatory solution. We’re not there yet, in that our Congress is not yet prepared to talk about it. Our lawmakers aren’t yet there. One or two of them are, but not as a group. And this would be the regulation not of content—we’re not going to create a ministry of information that reads every Facebook page and takes things down. But it would be a way of regulating the algorithms. Opening up the black box, looking at what they are and how they work, and giving some people outside of the companies ways to understand that. And if you think that’s impossible because the platforms are too powerful, remember that once upon a time it was considered impossible to regulate the food industry. The food industry was only eventually regulated because socially concerned scientists began doing experiments, measuring food, and then they began publishing the results of their work. And in some cases they created newsletters or things focused on consumers. They raised public awareness, and now we have food regulation, which we all think is totally normal. And it wouldn’t occur to us that we shouldn’t have it. And so we’re still at that phase. We could get there. It’s not technologically impossible. And there are computer scientists who are working on this in some universities.

The second solution that is worth thinking about would be putting a lot of thought into what a public-interest social-media project would look like. And by this I don’t mean the BBC website. If you were to have online conversations that were good and useful and fruitful, how would they be designed? What algorithms would they be based on and what would they seek to do? And this is also a huge area of research and experimentation, and there are many examples, in fact, of people who’ve tried versions of this. The Taiwanese are very interested in this, for obvious reasons. The Taiwanese are very interested in democracy and how to protect it. For example, there’s a platform called Polis, where you can essentially hold an online debate, except that instead of everybody shouting at each other and being angry, it sorts people into groups, so that it becomes clear where the areas of consensus are. So could you create consensus online? Could you find other ways of talking online? Could you create a social media that has slightly different rules, in the ways that you have rules for a town hall or rules for Wikipedia, which is a great example, actually? Could you have an online conversation that had rules? Maybe the rules would be that no one can be anonymous. Everybody has to be identifiable as a real person. So there are no bots. Maybe some of the rules are that when you post things, you have to wait three hours before it appears, so that when you are really mad and you post something, you have three hours to think about it and take it back.

There’s a thing called frontporchforum.com in Vermont, which works a little bit like this. There are a few other things. And then the challenge would be finding ways to finance it. Finding ways to persuade people to communicate on that and not on Facebook. But it’s not impossible, and this is, again, a great area of really interesting research.

Axelrod: You mentioned Wikipedia. Significantly, it isn’t supported by advertising. And this is enormously lucrative. So I don’t have a lot of faith that the platforms are going to regulate themselves.

Applebaum: No, they will not. They could. They know a lot about what spreads and what doesn’t spread. Facebook has even told us that after January 6, they were much more cautious about what kinds of things they allowed to go forward and how information was spread. And when I heard that they’d done that, I thought, Well, if they could do it at that moment, why didn’t they do it before? So no, they are not going to regulate themselves. They don’t have any interest in regulating themselves. Appealing to them to be nicer or more public-spirited seems quite pointless to me. And that’s why I hope eventually we could get to a conversation about some kind of regulation, because it seems to me that there is a bipartisan interest in this. The political scientist Francis Fukuyama, I’ve had this conversation with him a couple of times, and he does think it’s impossible. And so he has another idea, which is that at least we could create something called middleware, which would at least allow us to choose our algorithms, so we would have some control over what kind of information we see. And that’s another kind of technical solution.

Editor’s Note: This article is part of our coverage of Disinformation and the Erosion of Democracy, a conference jointly hosted by The Atlantic and the University of Chicago Institute of Politics. Learn more and watch sessions here.